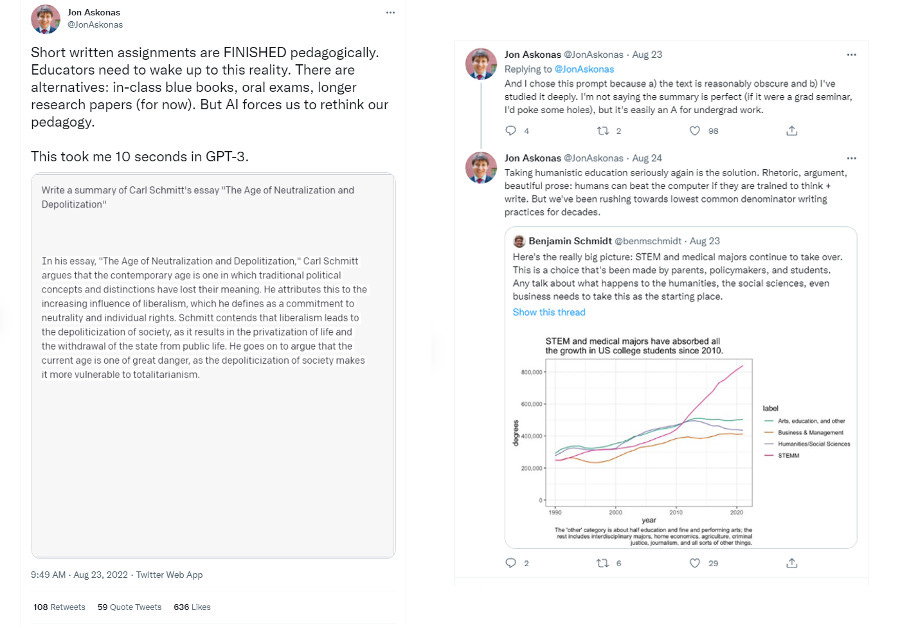

The hot topic of the moment on Higher Ed Social Media is people screaming at each other about student loan forgiveness, but the thing that I’m interested in talking about happened before all that. Specifically, it was prompted by this brief Twitter thread from a professor at Catholic University (which I’m posting in screenshot form below because his bio says he auto-deletes tweets and I want to preserve the possibility of this making sense to someone who stumbles across it later…):

My very first reaction to this is mild amusement because when I was at SciFoo back in June, Scott Aaronson repeatedly made the same comparison (though in less flattering terms). He noted that GPT-3 output tends to have the right general form for a paper, and uses most of the key terms in ways that are mostly grammatically correct, but if you know the subject matter, you can tell that it doesn’t reflect any real understanding. “So,” he said, “It’s effectively an undergrad.”

My slightly deeper reaction, as hinted at on Twitter, was that this fits with a general evolution of my thinking about issues around “academic honesty,” which is that we worry about this way too much and in unproductive ways. I think that the focus on preventing and catching cheating is ultimately pretty corrosive for the whole educational process, and while we may need to “rethink our pedagogy,” I don’t think it’s necessarily in the direction of eliminating the kind of short written assignments that you could imagine GPT-3 generated text getting a good grade on.

To the extent that there is rethinking involved here, the central question has to be “Why are you assigning students to do these particular tasks?” (Which, it should be noted, is a question we should be asking all the time, not something that’s newly prompted by the rise of machine learning…) Answers to this will span a range of possibilities, but the extremes of the spectrum are on the one end “Because it will help the students learn the material,” and on the other “Because it will help the professor assign a grade.”

The position that “short written assignments are FINISHED pedagogically” only makes sense if you’re coming at the assignment from somewhere well toward the “assign a grade” pole. If your primary concern with a particular assignment is to winnow out the chaff who didn’t do the reading and rank-order the wheat who did, then, yeah, GPT-3 is an extinction event. Unethical students can absolutely use one of these text generators to score well without actually doing the assigned reading, and if you prioritize grades above all else, this kind of assignment will only be useful under panopticon conditions.

If, on the other hand, the point of the assignment is that doing it will help students learn the material— that distilling an essay on political philosophy down to a one-paragraph summary will force them to clarify their own thoughts in a useful way— then there’s no reason to stop using them. The fact that unethical students can skip past the assignment with the aid of something like GPT-3 doesn’t mean that the ethical students won’t benefit from going through the exercise. And, ultimately, that’s the whole point of the enterprise of higher education: to provide a framework in which students who care about a subject can, with a bit of effort, learn and grow and come to understand a subject in a deeper way.

Now, this is almost certainly an area where, in keeping with the emergent theme of this week’s prior posts about academia, my perspective is strongly influenced by my disciplinary background. I’m a professor in the physical sciences, where every question we might put on an assignment has a single correct final answer, and a fairly limited number of ways to arrive at it. Solutions to these can readily be aggregated, and have been available to be copied by unethical students in a variety of media for decades. Nigh on thirty years ago when I was in grad school there was a shady samizdat book floating around that collected detailed solutions to all the problems in Jackson’s infamous E&M text, and these days the solution manual for any textbook you might care to name can easily be found online. The ship has sailed, the horse has left the barn, the cat has been unbagged.

That doesn’t stop me from assigning students problems out of the textbook, though, because getting a good grade for arriving at the correct final answer is not the ultimate point. The point of the assignment is that grappling with the problem and working through the process to get to the solution is the best way to learn the material. The students who actually care about the subject know this, and will put in the effort even though they could easily find the solution online. And those are the students we ultimately should care most about.

“Yes, but what about the students who didn’t do the work and just copied the answer?” Well, what about them? They’re not learning anything, sure, but the only people they’re really hurting by that are themselves— at some point down the road, the fact that they didn’t learn what they were supposed to learn is going to bite them in the ass. Usually sooner than later. Catching and punishing cheaters in the moment does not, to me, seem like a high enough priority to degrade the experience for the honest students (and for me) by putting in the kind of onerous measures that would be necessary to have a high degree of certainty that malefactors will face punishment.

And coming at the design of course assignments from a perspective that prioritizes catching cheaters feels to me like starting the student-faculty relationship in a very bad place. The educational process requires a certain amount of trust, and heavy-handed anti-cheating measures send a message of distrust to the class that I think is ultimately counter-productive. I’d much rather start from a presumption of positivity: “We are here so I can assist you in learning this material, because we both care enough about the subject to invest some significant effort in that.”[1]

This is not to say that I don’t do anything to try to catch students who aren’t doing the work they need to do— I write some of my own homework questions so they’re not all from the book, I make sure the exam questions aren’t identical to textbook problems, I try to include enough active engagement in class that I have an idea of what each student’s level of understanding is before I sit down to grade their work. I make use of assignments that students could cheat on in places where it makes sense to do that, but they’re not the sole determinant of students’ grades.

And, if we’re being honest, the cheaters, like people who commit crimes in other walks of life, are generally not choosing this path because they have the ability that would be needed to do it well, so they’re usually not hard to spot. They’ll copy a solution that uses a technique not covered in the course, or blindly copy a solution with an error in it, or introduce an error by copying something down wrong. The extra return of doing invasive proctoring and the like to get the tiny fraction of cheaters who won’t be stupidly obvious about it just isn’t worth the hassle either for me or for the students who are going about this honestly.

And this basically works, at least in majors courses. I started doing “self-scheduled” exams in sophomore modern physics a year or two before the pandemic, allowing students to choose a three-hour window within a span of a few days in which to complete the test and upload their solutions to the Moodle site, and didn’t see any evidence of significant problems. I didn’t get answers to the exam problems that seemed dramatically better than I would’ve expected given a student’s level of participation in class or their performance on homework, and I didn’t find the clusters of odd answers that come with excessive cooperation between classmates. As far as I could tell, they approached the self-scheduled tests as honestly as they approached in-class exams with live proctoring.

More broadly, the ultimate issue is that, by and large, the students who are taking dishonest shortcuts aren’t actually interested in the subject of the course in question. This is again largely a self-correcting problem— if they get through the course, they’re going to go away and do something they actually do care about. In extreme cases, they may not be interested in college at all, and are just doing it out of some vague sense of obligation and putting in the absolute minimum possible effort. (This is a very small fraction of the population, though.)

The solution to both of these, though, seems to me to be to not put students in that position in the first place. To the largest extent possible, we should give students enough agency so that they’re not being forced to take courses they’re not interested in just to check a box on a form. This won’t always be possible, particularly in fields that are very hierarchical, but in that case it’s on us as faculty to make clear how the thing they’re bored by relates to and is essential for the later stuff that they will care about. And beyond that we should have more and better options for those students who are not currently interested in any academic pursuits so they don’t feel obliged to take up space in our classes.

So, I don’t think GPT-3 is necessarily the death of the one-paragraph essay, any more than the existence of Chegg.com is the death of the textbook-based homework assignment. It might be the death of them as vehicles for lazy grade-based “pedagogy,” but there’s no reason to stop using them in places where they’re genuinely useful exercises for students who approach them honestly. Because in the end, the students who will benefit most from them will approach them honestly, and those are the students we should care most about when we think about designing our courses.

The title I used is a reference to a rant from last summer, though in the end I didn’t see a way to mention it in the main body of the post.

And if you’d like to cut and paste somebody else’s passionate argument for the public flogging of students who plagiarise homework, the comments will be open:

[1] My first-day-of-class anti-cheating speech includes something along the lines of “I do not know in detail how our current honor code procedures work, and one of my career goals is to retire without knowing how our honor code procedures work. Please do not make me learn how our honor code procedures work.” Said honor code has been in place for something like ten years, so I’m doing well…

> And, ultimately, that’s the whole point of the enterprise of higher education: to provide a framework in which students who care about a subject can, with a bit of effort, learn and grow and come to understand a subject in a deeper way.

That's a pretty... naive belief in the purpose of education in general and higher education in particular. In high school, I had to take plenty of classes I did not care for (scientific ones) - and perform - because failure to do so would impact my academic career and professional life thereafter.

Higher education serves as a stamp of approval for validating who, in our societies, "deserves" a shot at upper middle class lifestyle/professional status.

And, while it may be true that, in scientific fields, "the fact that they didn’t learn what they were supposed to learn is going to bite them in the ass", there are plenty of fields where you can bullshit for a long time. A professional job in corporate America does not, in practice, truly require a degree. But companies do rely on a degree to denote raw intelligence and work ethic. And those two qualities are pretty relevant to most jobs. So I can see why people would be upset with cheating.

I've written about GPT-3 before and basically there are going to be a lot of the more naive/less attentive faculty freaking out in a year or two when they start to understand what has happened (or more likely, understand only partially). Anybody who is still using writing just as something students do to prove they did the homework should just come out with their hands up, it's game over. But they shouldn't have been doing that in the first place. Because not too long from now the successor to GPT-3 is going to be able to put some form of a real-time API call or something of the sort to Wikipedia etc. and actually get the basic content sort of right as well as the form.

What we need to start doing with writing (and I think other expressive forms) is teaching students how to invest in developing a distinctive voice and style as well as learning content that isn't in a wiki or other knowledge database. Essentially how to be more distinctively human. At the same time we need to start honestly teaching students how to usefully direct AI engines to produce the best outcomes--you've probably seen that the art-based AIs produce the best stuff when someone has a really specific image in mind and has a great descriptive vocabulary that the AI can work from.