The Cult of G.E.N.I.U.S. Pt. 2

A Speculative Exploration on the Possibility of a Full-Stack, AI Architect

Part 2: Setting up G.E.N.I.U.S.

In this Post[1]

Teaching the A.I. my Design Style

Teaching my A.I. to Play Well with Others

Having the A.I. Interview its First Client

Personalizing the AI:

To create an AI Architect and set up G.E.N.I.U.S., the first thing I would want to do is create a digital version of myself. Chat GPT and other large language models (“LLMs”) are trained on a global corpus of text. If I ask it to respond as an architect, it will, but it’s synthesizing everything it knows about architects, generally, and it probably doesn’t know anything about me, in particular.

Emerging startups have begun to tackle this in the form of personalized AI. These AIs aren’t trained on the Encyclopedia Britannica or the internet – they ‘train’ on my documents, my writings, my emails, etc. So they respond like . . . me. They also have additional, relevant training that you would expect from an LLM, so they will exhibit that ‘intelligence’ that Chat GPT appears to have. G.E.N.I.U.S. will eventually be a suite of solutions, and a team of AIs, but all fundamentally based on a personalized AI, based on Human Eric. When G.E.N.I.U.S. speaks, it will be as if I am speaking, but imbued with the intelligence of Chat GPT, which I’m sure will be a potent combination.

Microsoft 365 Copilot appears closest to debut. The program promises to integrate data from all the Microsoft software that I already use. When I give it a request, it combines all of my existing PowerPoints, MS Word documents, emails, chats, my Outlook Calendar, etc. with an LLM (probably GPT 4 or GPT 5). So when I give it a request like ‘Please set up a meeting with a potential new client, John Johnson’ it will go through all my documents (or all documents for which I give it permission) and execute that task. Since 365 Copilot is not out yet (expected Summer 2023) we can’t say for sure what it will and will not do. However, based on the claims, some of its steps might include:

Scheduling the Meeting

Going through my emails to determine which contacts should be included in a first meeting with John Johnson. Given its contextual awareness, it might select someone from my marketing team, or my studio director, or just myself.

Sending invites to potential invitees to understand their availability. Hopefully, they have a personal AI, too, that can respond on their behalf. That way there’s no lag while someone is checking their calendar.

Confirming the meeting based on everyone’s availability, and scheduling it

Preparing for the Meeting

Preparing a briefing packet to help me get ready for my meeting

Surfacing any prior communications with John Johnson, and distilling them to understand what Johnson’s potential as a client might be.

Scanning the web for any recent news about John Johnson that might be relevant to the meeting

Scanning social media to see what John Johnson has been posting about.

Using my email, gleaning any details about what kind of project Johnson is thinking about. It then might compare that information against my past projects by going through my files, and surface examples of past projects that align well with what Johnson is considering. It would add any information found on the web about John Johnson and use it to help inform the analysis.

Using my PowerPoint files, preparing a presentation deck that features those past projects, as well as other information about my firm and why I rock. Because it’s iterating off my files, it will be reflective of my brand, feature my logos, etc.

Using all data collected so far, preparing a script and/or set of talking points for me to use in my pitch to Johnson.

Scheduling a rehearsal time so that I can review the briefing packet and practice my pitch

Offering feedback on my delivery, and altering the presentation accordingly.
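Of all the steps above, ‘confirming the meeting based on everyone’s availability’ is the most mechanical, so it’s worth sketching. To be clear, this is not how 365 Copilot works (nobody outside Microsoft knows that yet). It’s a minimal illustration in Python, assuming each invitee’s calendar can be reduced to a list of busy intervals. The function name, the attendee names, and the times are all made up for the example.

```python
from datetime import datetime, timedelta


def first_common_slot(busy_by_person, window_start, window_end, duration):
    """Return the earliest start time at which nobody is busy, or None."""
    # Flatten every attendee's busy intervals into one list sorted by start.
    busy = sorted(
        interval
        for intervals in busy_by_person.values()
        for interval in intervals
    )
    candidate = window_start
    for start, end in busy:
        # If the gap before this busy interval is long enough, stop here.
        if start - candidate >= duration:
            break
        # Otherwise push the candidate past this busy interval.
        candidate = max(candidate, end)
    # The candidate only counts if the meeting still fits in the window.
    if candidate + duration <= window_end:
        return candidate
    return None


# Hypothetical calendars for one day (assumed data, not real output).
day = datetime(2023, 6, 1)
busy_by_person = {
    "eric": [(day.replace(hour=9), day.replace(hour=10)),
             (day.replace(hour=13), day.replace(hour=14))],
    "studio_director": [(day.replace(hour=9, minute=30), day.replace(hour=11))],
    "marketing": [(day.replace(hour=11), day.replace(hour=11, minute=30))],
}
slot = first_common_slot(
    busy_by_person,
    window_start=day.replace(hour=9),
    window_end=day.replace(hour=17),
    duration=timedelta(hours=1),
)
print(slot)
```

With these made-up calendars, the first hour that works for all three people starts at 11:30. A personal AI would presumably layer judgment on top of this (who really needs to be there, whether Johnson prefers mornings), but the core of the step is plain interval arithmetic.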

Consumer-facing AI is generally trending towards personalization. Google has also announced plans to implement similar features across Google Docs, Gmail, etc. Whichever software you use, it is likely that your future AI avatar will know how to use it, how you use it, and all the knowledge contained within all your files in all those programs.

Teaching the A.I. my Design Style

As noted in the article, research has been going on for decades in the field of shape grammars. There, I detailed the work of José Pinto Duarte, the Stuckeman Chair in Design Innovation at Penn State University, and his team, who have been pioneering the development of ‘shape grammars’ for particular architects and ‘generic grammars’ that include the styles of many architects. By analyzing an architect’s existing plans, one can develop spatial algorithms that reflect that architect’s particular style and aesthetic.[2] In various forms, the technology exists to analyze the plans (and other drawings) of any particular architect and develop an algorithm to mimic that design approach. Admittedly, it does not integrate all of the fuzzy, subjective components of design. The technology is shape based, and understands the world through that geometry, which means it mostly focuses on plans. It doesn’t understand my proclivities towards a particular material, for instance, but there are other ways to integrate that as well. Simultaneously, researchers have made progress in ‘codifying’ artistic styles in the fine arts[3] as well, which is why you can now get Midjourney AI to conjure you an image of Godzilla in the style of Van Gogh.

An AI-generated image (through a human proxy) recently won a category at the Sony World Photography Awards, although the prompter subsequently refused to accept the award. And to round out AI’s artistic year, an AI version of Drake and an AI version of The Weeknd recently collaborated on a new song which made the rounds on Spotify until it was taken down under copyright threat. The artistic capabilities of AI seem to be just getting started.

I’m certain that I just lost some readers. There will be some readers who feel that their design style is so unique, and creative, that it could not be imitated by any machine. If someone is married to that conviction, there’s not much I can do to convince them, although I’ll be trying in this series. For the skeptics, I would consider it like an asteroid problem. If I told you that there was a 5% chance that an asteroid was going to hit your city in the next 12 months, you’d probably do something. So, even if you think there’s a 95% chance that I’m wrong, maybe it’s still worth taking a look at your practice and asking yourself some tough questions about how all of this will affect you.

Teaching my A.I. to Play Well with Others

As of now, G.E.N.I.U.S. is just a brain in a jar. From here, I would need to find a way for G.E.N.I.U.S. to interact with others. Let’s start with my staff. I’m not sure whether I will have or need staff in the future, but for now let’s assume that I have a team that periodically needs to ask me questions.

Programs like Ingestai.io do something similar to Microsoft 365 Copilot, but aren’t restricted to Microsoft products. Ingestai.io works with whatever applications and files I give it. It can survey my existing data on platforms like Slack, WhatsApp, Discord, Amazon, etc., as well as any physical files I upload. We feature it here because with Ingestai.io, I could set up a chatbot on my company’s Slack or Discord. That bot could then answer any questions that might come from my staff. If my staff had a question like ‘When are we starting the John Johnson project?’ it would answer them based on all the information gleaned from my files and applications. It wouldn’t know all that I know, obviously, but the more of my data it has, the better it is at imitating responses in my voice. It could also answer questions externally, from clients, subcontractors, etc. Presumably, if I uploaded every application I’ve ever used, and every document I’ve ever written, and all digital information that exists about me, it would be pretty good at answering questions in my voice. However, this raises obvious privacy concerns which we will all have to figure out together. For the purposes of this exercise, let’s assume that I don’t care about my own privacy, and give G.E.N.I.U.S. everything I have. All my emails, my Spotify playlists, the contents of all my hard drives, my 7th grade history paper, etc. That will ensure that G.E.N.I.U.S.’s performance adheres as closely to mine as technologically possible.
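To make the ‘answers based on my files’ idea a little more concrete, here is a toy sketch of the lookup step: given a staff question, find the snippet of my data that looks most relevant. I’m not claiming this is how Ingestai.io works internally. It’s a deliberately crude word-overlap match, the snippets are invented, and a real system would most likely hand the retrieved text to an LLM to phrase the answer in my voice.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def best_snippet(question, snippets):
    """Return the snippet sharing the most words with the question, or None."""
    q_words = Counter(tokenize(question))
    best, best_score = None, 0
    for snippet in snippets:
        s_words = Counter(tokenize(snippet))
        # Counter intersection keeps the minimum count of each shared word.
        score = sum((q_words & s_words).values())
        if score > best_score:
            best, best_score = snippet, score
    return best


# Invented examples standing in for emails, chats, and files.
snippets = [
    "Kickoff for the John Johnson project is scheduled for June 12.",
    "Invoice for the Smith residence was sent on May 3.",
    "Studio lunch is every Friday at noon.",
]
print(best_snippet("When are we starting the John Johnson project?", snippets))
```

The point of the sketch is the dependency it exposes: the bot can only surface what it has been given, which is why the quality of its answers scales with how much of my data I’m willing to hand over.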

Having the A.I. Interview its First Client

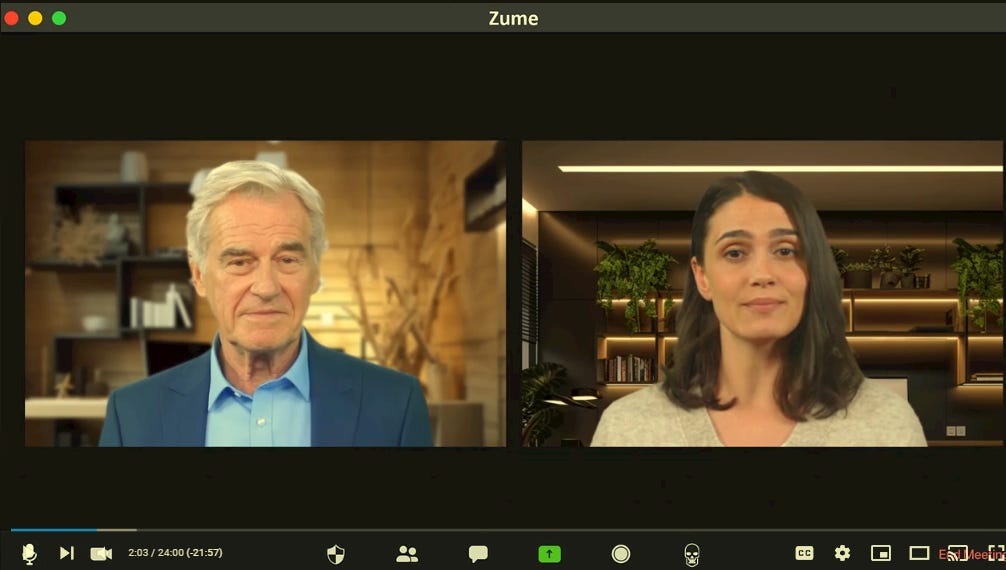

As I demonstrated in the video, Chat GPT and GPT can already do this. The difficulty would only be in sufficiently programming an interface with my personality, dispositions, and architectural approach. LLMs are capable of natural human language, and even sophisticated, technical human language. Once personalized AI becomes ubiquitous, they’ll have “Eric” language as well.

For interviewing clients, I might use something like Ingestai.io and interview the client via a chatbot. However, since the API for GPT 4 is open for developers, I could also just build my own custom chat bot. Or just use a premade service, like Chatbase.co.

If I wanted a real time, video avatar, as shown in the video, I may have to wait a few years. Companies like Synthesia.io or Hour One can build me my own custom video avatar for a modest sum, but it won’t be real time. It will just read off scripts that I input, using my face and voice. Creepy! Real time video avatars do actually exist today, but we’re still waiting on quality real-time video avatars – could be a few years or a few weeks. The current ones are kinda terrifying. On the plus side, a digital avatar will open up expansive new markets, because it allows G.E.N.I.U.S. to be an architect in any language. G.E.N.I.U.S. could simultaneously interview clients in Spanish, Portuguese, Mandarin, etc., even if Human Eric doesn’t know any of those languages.

Regardless of which technology I use to interview the client, I would use an LLM in a model similar to what I used in the video, where the LLM is programmed to ask questions from the standpoint of an architect. Assuming it’s tied to one of the programs identified previously, it would ask questions that I would ask, not just the typical questions that a typical architect would ask.
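For anyone curious what ‘programming the LLM to ask questions from the standpoint of an architect’ looks like structurally, here is a minimal sketch. It assumes the role/content message shape used by chat-style LLM APIs, with a ‘system’ message carrying the persona. The model itself is replaced by a scripted stand-in (`scripted_llm`) so the example runs without any API key, and the class name, persona text, and questions are all hypothetical.

```python
class InterviewBot:
    """Keeps the running conversation and delegates replies to an LLM."""

    def __init__(self, persona, llm):
        # `llm` is any callable that takes the message list and returns the
        # assistant's reply as a string. Here it is a placeholder; in practice
        # it would wrap a call to a hosted model.
        self.llm = llm
        self.messages = [{"role": "system", "content": persona}]

    def reply(self, client_text):
        # Record the client's turn, ask the model, then record its answer.
        self.messages.append({"role": "user", "content": client_text})
        answer = self.llm(self.messages)
        self.messages.append({"role": "assistant", "content": answer})
        return answer


QUESTIONS = [
    "Who will be using this building day to day?",
    "What is one place you have visited that felt exactly right?",
    "What is your budget range and timeline?",
]


def scripted_llm(messages):
    """Stand-in model: returns a canned question based on the turn count."""
    turns = sum(1 for m in messages if m["role"] == "user")
    return QUESTIONS[min(turns, len(QUESTIONS)) - 1]


bot = InterviewBot("You are an architect interviewing a new client.", scripted_llm)
print(bot.reply("We want to build a new headquarters."))
print(bot.reply("About two hundred employees."))
```

Swapping the persona text is where the ‘questions that I would ask’ part would come in: a personalized model would supply a persona built from my own past interviews rather than a generic architect prompt.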

The objective would be to conduct an interview so that the necessary information is extracted from the client. How much information depends on one’s approach as an architect, the complexity of the project, and the personality of the client. Different architects have different approaches to interviewing clients, and different ways of assimilating the answers. Given that G.E.N.I.U.S. has been trained on my past work, I can expect that it will ask questions in the way that I do, but with some advantages we are only beginning to understand. For instance, Rondinelli Morais, a Brazilian computer scientist, has recently developed a real-time emotion detection model to evaluate faces via video. The expressions are evaluated based on a wheel of emotions, indicating what mix of happy, angry, bored, etc. a subject is feeling in the moment. For any architect who has ever struggled with ‘reading’ a client, or had a client that had trouble expressing themselves, G.E.N.I.U.S. now has that problem solved. G.E.N.I.U.S. can pursue more details when a client appears pleased, or change directions when they seem bored, etc.
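The ‘pursue more details when pleased, change direction when bored’ rule can be written down in a few lines. This sketch assumes the emotion model hands back a score between 0 and 1 for each emotion. I don’t know the actual output format of the model mentioned above, so the labels, the threshold, and the move names here are my own placeholders.

```python
# Hypothetical groupings of emotion labels (assumed, not from any real model).
POSITIVE = {"happy", "interested"}
NEGATIVE = {"bored", "angry"}


def next_move(emotions, threshold=0.5):
    """Pick an interview move from a dict of emotion scores in [0, 1]."""
    if not emotions:
        return "continue"
    # Find the strongest emotion in this video frame or time window.
    dominant = max(emotions, key=emotions.get)
    # Ignore weak signals so the interview doesn't lurch on noise.
    if emotions[dominant] < threshold:
        return "continue"
    if dominant in POSITIVE:
        return "probe_deeper"
    if dominant in NEGATIVE:
        return "change_topic"
    return "continue"


print(next_move({"happy": 0.8, "bored": 0.1}))
print(next_move({"bored": 0.7, "happy": 0.2}))
```

The first call returns ‘probe_deeper’ and the second returns ‘change_topic’. The threshold is the interesting design choice: set it too low and G.E.N.I.U.S. changes the subject every time a client blinks.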

However long it takes to set up the process, I remain mindful that this is the last labor I’ll perform in this phase, ever.

Once the LLM is set up with a video avatar or chatbot, it will be able to interview a hundred clients a day, or a thousand, indefinitely. Moreover, it will record all of those interactions and learn from them. In the beginning, I would want to build each interview into a training set for a machine learning model, so that G.E.N.I.U.S. could take notice of interviews that went well, and those that didn’t, and improve its own technique.
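As a rough idea of what ‘building each interview into a training set’ might mean in practice, each finished interview could be stored as one labeled record. The sketch below uses one JSON object per line, which is a common convention for such datasets. The field names and the outcome labels are assumptions of mine, and how ‘went well’ gets defined is a judgment call I’d still have to make.

```python
import json


def interview_record(client_id, transcript, outcome):
    """Serialize one interview as a single JSON line."""
    return json.dumps(
        {"client_id": client_id, "transcript": transcript, "outcome": outcome},
        ensure_ascii=False,
    )


def append_record(path, record_line):
    """Append one record to a JSON Lines file, creating it if needed."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(record_line + "\n")


# Invented example: a two-turn interview that led to a signed contract.
line = interview_record(
    client_id="johnson-001",
    transcript=[
        {"role": "assistant", "content": "Who will be using this building?"},
        {"role": "user", "content": "About two hundred employees."},
    ],
    outcome="signed",
)
print(line)
```

A thousand interviews a day would turn into a thousand such lines a day, which is the raw material a later model would need in order to compare the interviews that went well against those that didn’t.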

Check In: Once the client is properly interviewed, G.E.N.I.U.S. should now have sufficient information to build out a design brief. I realize that every architect has a different definition of what’s considered ‘sufficient’ in this process. However, it’s customizable, based on the personality of the architect involved, project type, etc. ‘Interviewing the Client’ may in fact mean interviewing many clients. If you’re designing a company headquarters, you may want to interview all the members of the board and senior leadership, or the employees, or the customers. How do we make such determinations?

We’ll figure that out as we continue with “Part 3: An A.I. Firm Strategy” which you can read now, and then Parts 4 & 5 “Schematic Design” on Friday. Subscribe below to be instantly notified.

[1] Reading Notes:

1. “GPT” refers to both Chat GPT and GPT 4 since both were used in the construction of the model. Chat GPT and GPT 4 have different capabilities, and wherever the use of one was specific, it is noted as such. Wherever “GPT” is used, it could refer to either Chat GPT or GPT 4.

2. References to ‘the article’ refer to “In the Future, Everyone’s an Architect (and why that’s a good thing)” parts 1 and 2, available on Design Intelligence. If you haven’t read them, you should probably begin by doing so, as they give important context to what follows.

3. References to the ‘technical addendum’ refer to the previous technical addendum to “In the Future, Everyone’s an Architect (and why that’s a good thing),” which was written to provide a detailed record of how the original AI architect/client exchange was developed.

4. References to ‘the video’ refer to the video included in “In the Future, Everyone’s an Architect (and why that’s a good thing),” which is also available on my YouTube.

5. I developed the initial language model on March 12th, 2023 and developed the video in the days after. I submitted the article for publication on April 7th, 2023. The article was published on April 26th, 2023. The speculations are current as of the date of publication; however, I expect them to be rapidly outmoded. I’ll be updating my Substack periodically to track new developments at the intersection of AI and architecture, but will leave these articles in their original form.

[2] Prof. Duarte’s initial experiments were developed on the work of Álvaro Siza, and his Quinta da Malagueira Housing Scheme, wherein Prof. Duarte taught a machine learning program to read and interpret the spatial grammar of Siza’s designs. The program was so faithful to Siza’s approach, that when Siza was confronted with the algorithmic-generated designs, he couldn’t distinguish between which ones he had designed, and which ones had been designed by an algorithm based on his work.