This one’s for a subscriber, who wrote:

Hi, Julian! I really appreciate and enjoy what you are doing and posting. It often gives the right ideas or answers on the issues I am struggling with. So, please keep doing! On topics, I personally would love to hear your tips and tricks on the rubric development process together with stakeholders. Especially in context where stakeholders have very little understanding and experience in evaluation.

First up, thank you! It is so very heartening to receive this feedback, and to know you’re interested in learning more about this.

In my experience, and the experience of my colleagues, the process of co-developing and using rubrics is itself an evaluation capacity building exercise. Through this process, stakeholders gain an understanding of evaluation and first-hand experience in taking part. This helps the immediate purposes of the evaluation by increasing the likelihood that findings will be understood, endorsed, and used. It also helps the next evaluation, as stakeholders will (hopefully) have had a positive evaluation experience and should be better informed about what to expect and how to engage in conversations about value.

Quick backgrounder for the uninitiated

You can think of a rubric as a matrix of criteria (aspects of performance) and standards (levels of performance). Rubrics are a co-constructed set of lenses to help make sense of evidence and judge the value of something. Here's an example.

Yes, yes… but why?

Investing in rubric development brings a number of benefits to an evaluation:

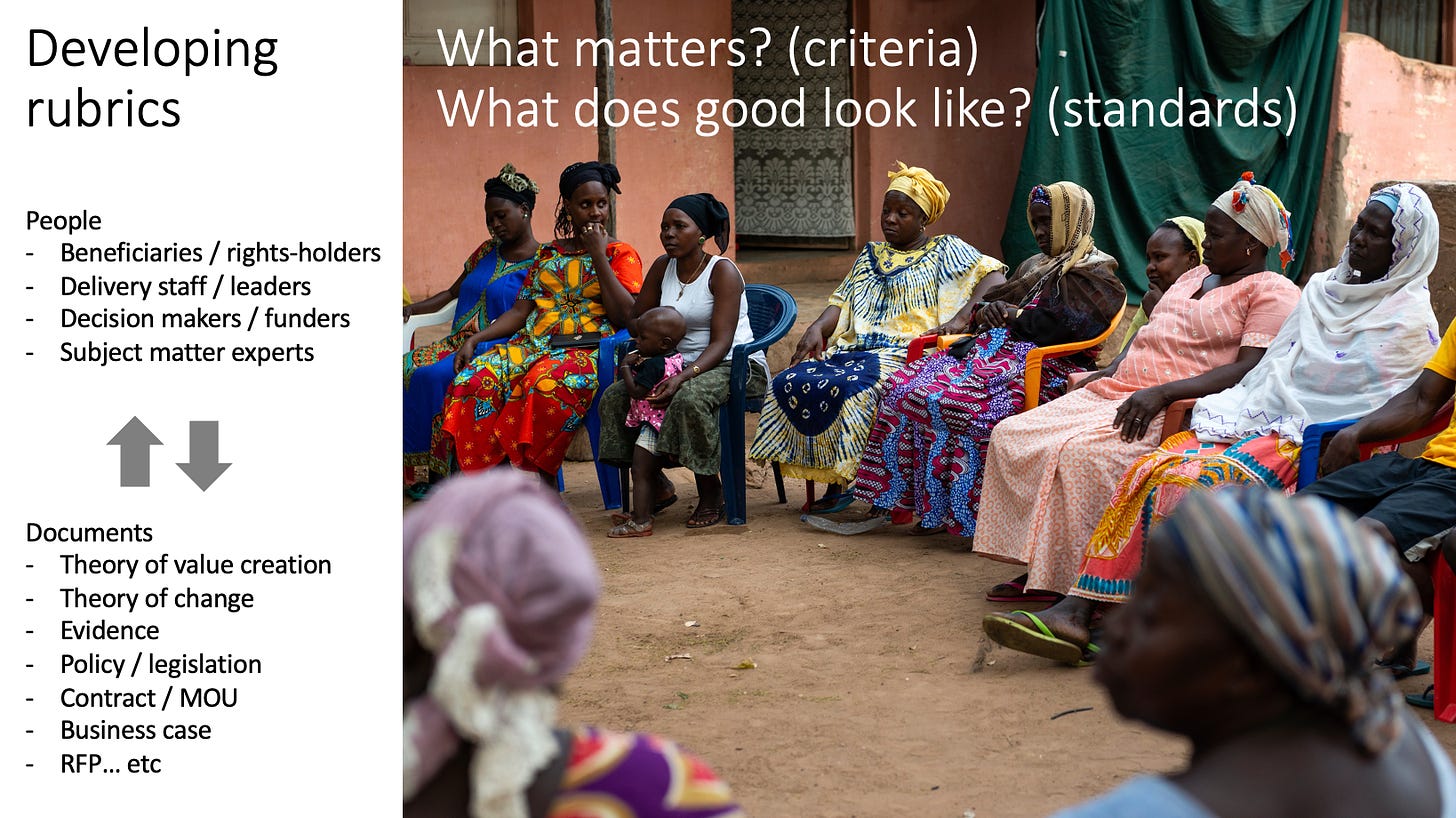

Creating the space to engage stakeholders in thinking explicitly about value - what matters, and what good looks like

Aligning the evaluation to the program’s value proposition, design, and context, so it focuses on things that actually matter

Selecting appropriate methods and focusing them on the right pieces of evidence

Organising the evidence by criteria, making it efficient to analyse

Providing an agreed set of lenses for interpreting the evidence and judging performance, success, quality, or value

Outlining a reporting structure for sharing key findings in a clear and compact way, that isn’t torture to read

Making the reasoning process transparent by ‘showing your working’.

Okay… but how?

The basic steps in rubric development are:

Identify the aspects of performance, success, quality, or value that matter. These are the criteria.

Define levels of performance. These are the standards. Examples are excellent, good, adequate, and poor; or excelling, embedding, evolving and emerging.

Define what each criterion would look like at each level of performance.
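If it helps to picture the matrix, here is a minimal sketch in Python of the three steps above. Everything in it is an assumption for illustration: the criteria and descriptors are invented for a "granny's new car" scenario, and `missing_cells` is a hypothetical helper, not part of any tool. It only stores the rubric and flags the cells a group hasn't agreed yet; it doesn't make judgements.

```python
# Illustrative only: the criteria and descriptors below are invented
# for a "granny's new car" scenario, not taken from a real rubric.

# Step 2: the standards (levels of performance).
STANDARDS = ["excellent", "good", "adequate", "poor"]

# Steps 1 and 3: each criterion maps to a descriptor per standard.
rubric = {
    "safety": {
        "excellent": "Top safety rating; easy for granny to get in and out of",
        "good": "Strong safety rating; minor access difficulties",
        "adequate": "Meets minimum safety requirements",
        "poor": "Known safety concerns",
    },
    "running costs": {
        "excellent": "Comfortably within granny's budget",
        "good": "Within budget with small trade-offs",
        # "adequate" and "poor" are not yet agreed with stakeholders
    },
}


def missing_cells(rubric, standards):
    """List the (criterion, standard) cells that still need a descriptor."""
    return [
        (criterion, standard)
        for criterion, levels in rubric.items()
        for standard in standards
        if not levels.get(standard, "").strip()
    ]


print(missing_cells(rubric, STANDARDS))
```

A check like this might be handy between drafts, as a prompt for which cells to take back to the group.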

This approach is flexible and needs to be tailored to context. While this flexibility is a strength of the approach, it also makes it hard to generalise about how rubrics should be developed. Therefore, the following tips are guidelines, not rules. I’ve adapted these tips from OPM’s approach to assessing value for money - a guide.

Bring the right people together

You shouldn’t sit alone in your evaluator cave and make a rubric. If you do, it’ll be based on your assumptions. What we need is a rubric that articulates what a relevant group of people collectively value about a policy or program. So we need to identify who those relevant people are, and find out what matters to them and what ‘good’ looks like from their various perspectives.

So, who needs to be at the table? Jane Davidson offers the following considerations:

Validity – Whose expertise is needed to get the evaluative reasoning right? As well as a lead evaluator with expertise in evaluation-specific methodology, it will be necessary to include people with expertise in the subject matter; in the local context and culture; and in interpreting the particular kinds of evidence to be used.

Credibility – Who must be involved in the evaluative reasoning to ensure that the findings are believable in the eyes of others?

Utility – Who is most likely to use the evaluation findings (i.e., the products of the evaluative synthesis)? It may be helpful to have primary intended users involved so that they can be part of the analysis and see the results with their own eyes. This increases both understanding of the findings and commitment to using them.

Voice – Who has the right to be at the table when the evaluative reasoning is done? This is particularly important when working with groups of people who have historically been excluded from the evaluation table such as indigenous peoples. Should programme/policy recipients or community representatives be included? What about funders? And programme managers?

Cost – Taking into consideration the opportunity costs of taking staff away from their work to be involved in the evaluation, at which stages of the evaluative reasoning process is it best to involve different stakeholders for the greatest mutual benefit? (Davidson, 2014, p. 8)

Use participatory processes

OK, so we’ve brought the right people to ‘the table’ (it might not actually be a table - it could be a Zoom room, a series of smaller meetings, an email chat)…

Now what?

You can generally expect to draw from documentation/literature, so it’s good to come prepared and well versed in relevant documents such as a business case, theory of change, relevant policy/legislation, service contracts, etc. These documents are likely to contain requirements, expectations and topics that can serve as conversation starters. Rubrics will draw inspiration from these documents. They may also have to align with parts of them.

Rubric development is iterative and may involve progressing through several drafts. Taking the time to reach agreement on the criteria and standards is “an early investment that pays dividends throughout the remainder of the evaluation”. Through their participation in this process, stakeholders become engaged in the evaluation design, see their expectations represented in the criteria and standards, and understand the basis on which judgements will be made.

If there are diverse viewpoints and values, it is desirable for stakeholders such as decision-makers, delivery teams, and communities to have transparent discussions about these early on, before the evaluation is conducted, with a view to reaching a shared understanding of what matters - or at least to acknowledge and accommodate different perspectives.

Running this kind of participatory process requires skilled facilitation to surface different viewpoints and ensure everybody’s voice is heard, while at the same time introducing a structured way of thinking about value that may be unfamiliar to stakeholders. It can help to warm up the group first by taking them through a simulation of the process, using a relatable scenario like developing a rubric for choosing granny’s new car, or for rating a dining experience.

There are infinite ways to approach this sort of participatory process. It’s up to you and your stakeholders to come up with a process that fits the context. To illustrate, some of the rubric development processes I’ve been involved in, with various colleagues, have included:

A two-hour roundtable with approximately 15 stakeholders representing diverse viewpoints including mental health service users, psychiatrists, nurses, planners and service managers… followed by another two-hour workshop two weeks later… and a third… till we had a rubric that everybody endorsed. The rubric was developed in real time, using a laptop connected to a projector.

A half-day workshop with about 20 scientists, sticking post-it notes to a window. We took photos of the notes, retreated to the evalcave, and returned with a draft rubric that we all refined together.

A full-day conference of over 100 doctors and practice managers, including plenary and break-out sessions to develop different criteria and standards. High stakes, nerve-wracking fun!

A series of individual interviews with key stakeholders, after which I drafted up a rubric and presented it back to them in a workshop for refinement and validation. The funder was not available to take part in these processes so I met with them subsequently to present the penultimate draft and invite feedback.

During the first year of a multi-year evaluation, we focused a stakeholder engagement cycle on identifying what matters and what good looks like, culminating in rubrics which we applied in subsequent years to evaluate footprints of system change.

A couple of evaluation team members developed tentative criteria from the program’s theory of change, followed by an online meeting with stakeholders to debate and refine them.

A client developed their own strawman rubric and emailed it back and forth till the wider stakeholder group and evaluation team arrived at an agreed version.

At a minimum, take the time to ensure key stakeholders understand and support the approach and the basis for making judgements, even if they are not present during rubric development. The evaluation is more likely to be accepted and considered valid if all the evidence and reasoning is traceable, open to scrutiny, and linked to criteria that were developed (or at least discussed) with stakeholders at the start.

Suspend conversations about measurement

The purpose of rubrics is to describe what matters (criteria) and what good looks like (standards). They aren’t indicators. They may be quite broad descriptions of the intended functioning and effects of a program. While indicators are specific and measurable, rubrics describe the nature of performance, success, quality or value that’s intended.

This can cause a bit of discomfort for some participants, and a question is likely to arise, along the lines of “but how are you going to measure that?”

During rubric development, it’s important to 'park' conversations about evidence and measurement. After the rubrics are developed, the conversation can turn to identifying what mix of evidence is needed, and will be credible, to support the evaluation.

This is in line with a principle that’s supported in economic evaluation: Infeasibility of data collection does not mean irrelevance of data. First work out what we need to know in order to inform a judgement, then consider whether we can get that information. Any information gaps are addressed in the discussion of uncertainty.

Tailor to context

Good criteria are specifically tailored to the program – and often relate to a particular point in time (i.e. what would 'good' look like at the time of the evaluation). This means that the definitions of criteria will be different for each program. Even if you use a given set of criteria, such as the OECD DAC criteria, the generic criteria should be defined in terms that are specific to the program and context.

Keep it simple

Rubrics need to be specific enough to guide meaningful judgements, yet not so over-specified that they try to cover every contingency. Remember, you’re not writing a computer algorithm to make judgements for you. You’re not writing a piece of legislation that has to be free from loopholes. The rubric is just a guide to support transparent, focused reasoning. Rubrics don’t make evaluative judgements - people do!

Thanks Julian - a nice summary. I do love a good rubric. The comment that the content of rubrics are not indicators is a good reminder - it's really hard for time-poor providers to park this, as they often want to make best use of the data they already have or are mandated to collect.

It would be great to hear your thoughts on what is often the next step - synthesizing all this into an evaluative judgement. Not all providers I've worked with want this, but some do.