Efficient Mixtures of Experts with Block-Sparse Matrices: MegaBlocks

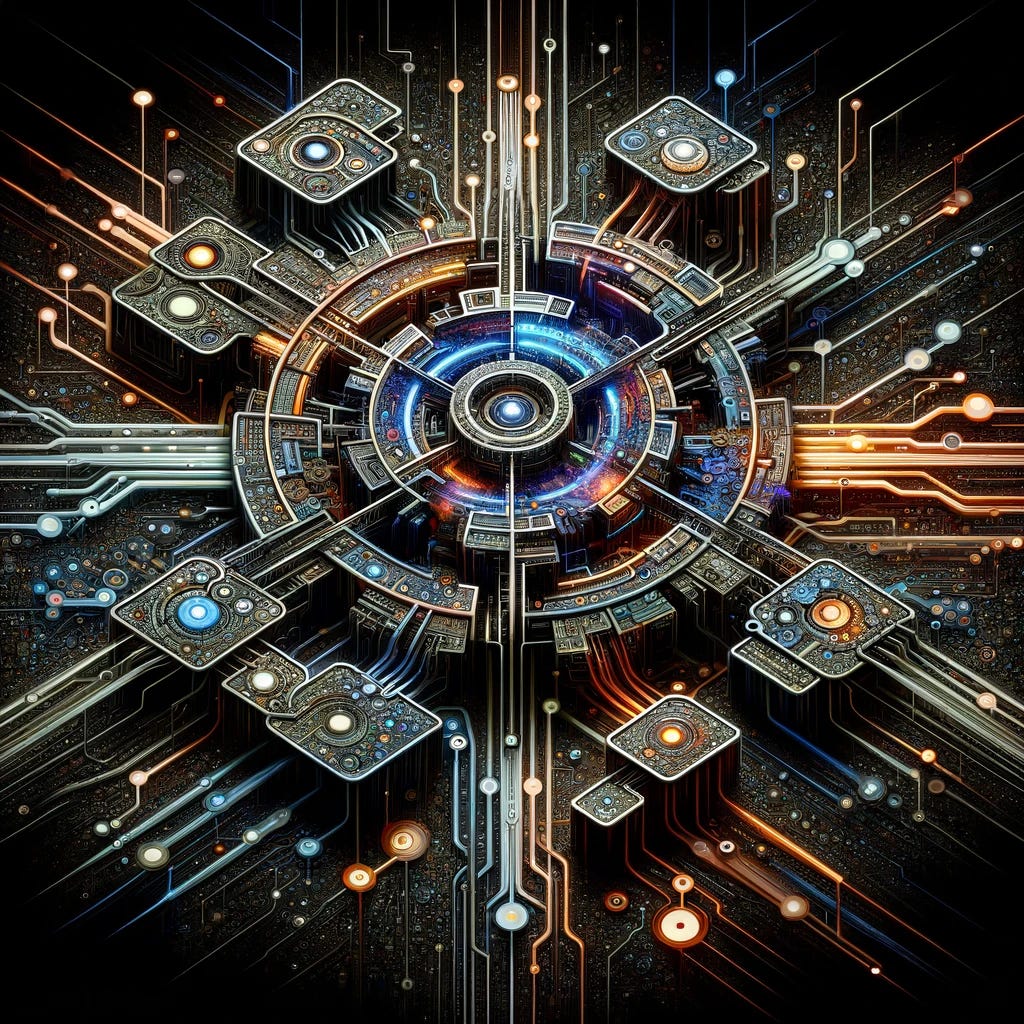

A new compute paradigm makes sparse Mixtures of Experts more scalable than ever before

The sparse Mixtures of Experts (MoE) algorithm has been a game-changer in Machine Learning: because each token is routed to only a few experts, modeling capacity can grow while the compute per token stays almost constant. This has produced a new generation of LLMs with unprecedented performance, including the Switch Transformer, Mixtral-8x7B, reportedly GPT-4, and more. Really, we’re just starting to see the …
