The Lost-in-the-Middle Problem in RAG 🙀

Did you know that advanced models like GPT, smart as they are, can sometimes struggle to give accurate answers to questions that involve a lot of context?

In the past year, "Chat with Your PDF" applications have gained popularity across many industries. Many of these apps, such as Castly, are built on GPT or other leading large language models.

Despite their popularity, these solutions weren’t perfect.

Customers often have diverse requirements that a simple API call may not address adequately. For example:

Can your Chat2Doc tool function with multiple documents?

Can it keep track of a conversation thread about a single document?

Does it handle very lengthy documents effectively?

Can it extract tables from PDFs?

The list of questions goes on.

In today's post, we'll tackle one specific challenge: handling long documents that produce many retrieved results. If you want to learn how to extract tables from PDF files, check out our latest post. We'll dig into the other challenges in future posts!

What’s the problem here?

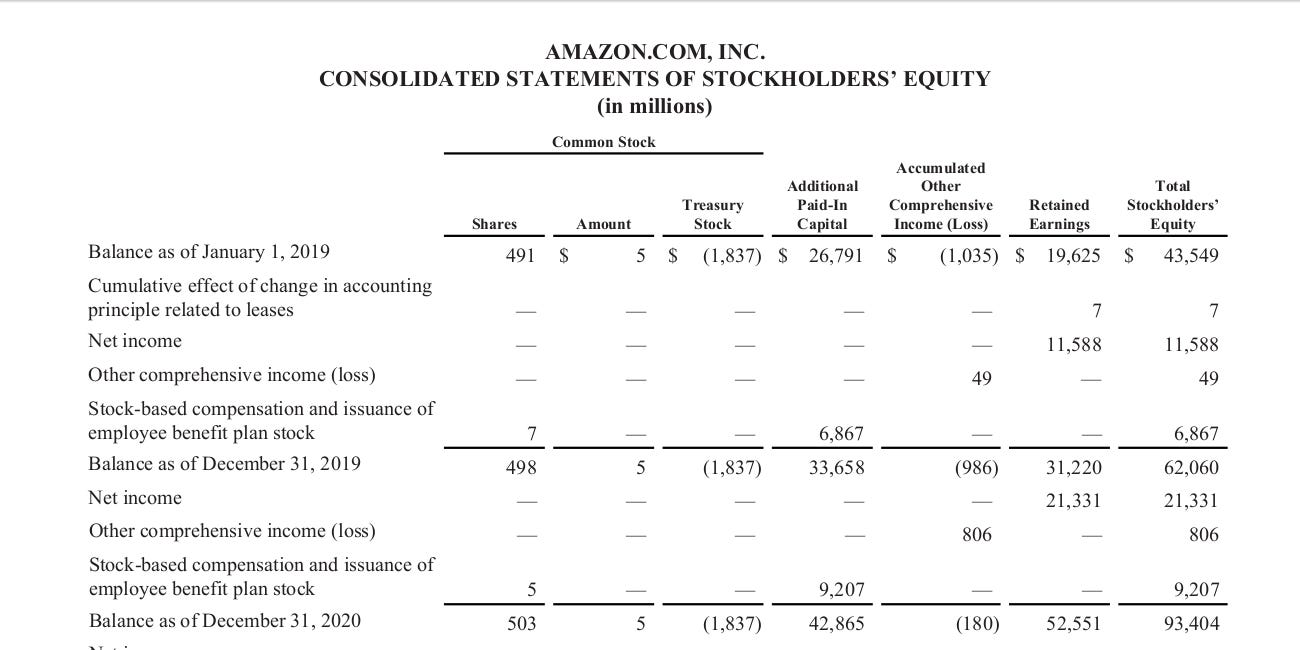

In their paper “Lost in the Middle: How Language Models Use Long Contexts”, N. F. Liu et al. (2023) reported interesting findings about how LLMs behave with long inputs.

The study found that LLMs generally perform best when the relevant information appears at the beginning or end of the input context. Performance drops significantly when the model has to use relevant information buried in the middle of a long context.

What are the suggested solutions?

It’s surprisingly simple.

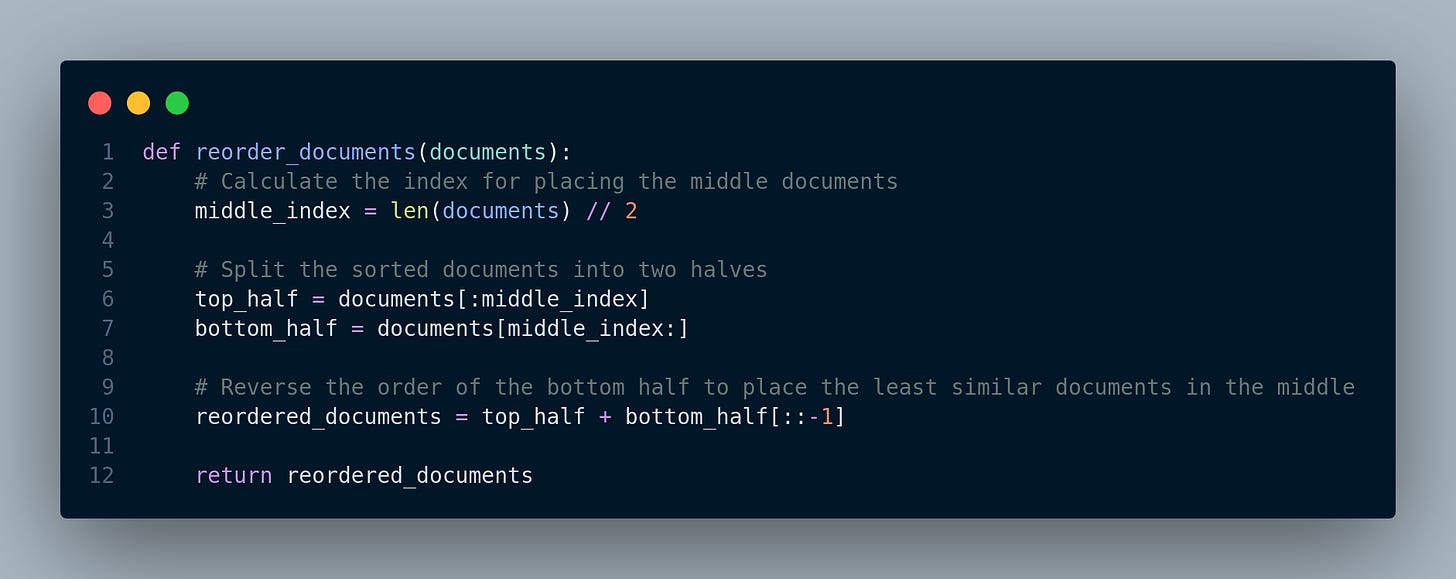

The most common approach, in use since last year, is to reorder the retrieved documents: the documents most similar to the query go at the top, the next most similar at the bottom, and the least similar in the middle.
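Here is a minimal sketch of that reordering in plain Python, assuming the input list is already sorted from most to least similar to the query. The helper name `reorder_edges_first` is hypothetical. It puts ranks 1, 3, 5, … at the front and …, 4, 2 at the back, so rank 1 comes first, rank 2 comes last, and the weakest matches meet in the middle.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def reorder_edges_first(ranked: Sequence[T]) -> List[T]:
    """Hypothetical helper: reorder items sorted most-relevant-first so the
    strongest matches sit at both ends and the weakest end up in the middle.

    Assumes ranked[0] is the most relevant item.
    """
    front = list(ranked[0::2])       # ranks 1, 3, 5, ... keep their order at the top
    back = list(ranked[1::2])[::-1]  # ranks ..., 4, 2 reversed so rank 2 lands last
    return front + back


print(reorder_edges_first(["r1", "r2", "r3", "r4", "r5"]))  # ['r1', 'r3', 'r5', 'r4', 'r2']
print(reorder_edges_first(["r1", "r2", "r3", "r4"]))        # ['r1', 'r3', 'r4', 'r2']
```

Slicing keeps the sketch short and always leaves the single best match at the very top, which matches the description above.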

In addition to this established solution, there are some innovative ones:

Compression: “LongLLMLingua: Bye-bye to Middle Loss and Save on Your RAG Costs via Prompt Compression”.

Majority vote: “Overcome Lost In Middle Phenomenon In RAG Using LongContextRetriver”.

How do we implement it?

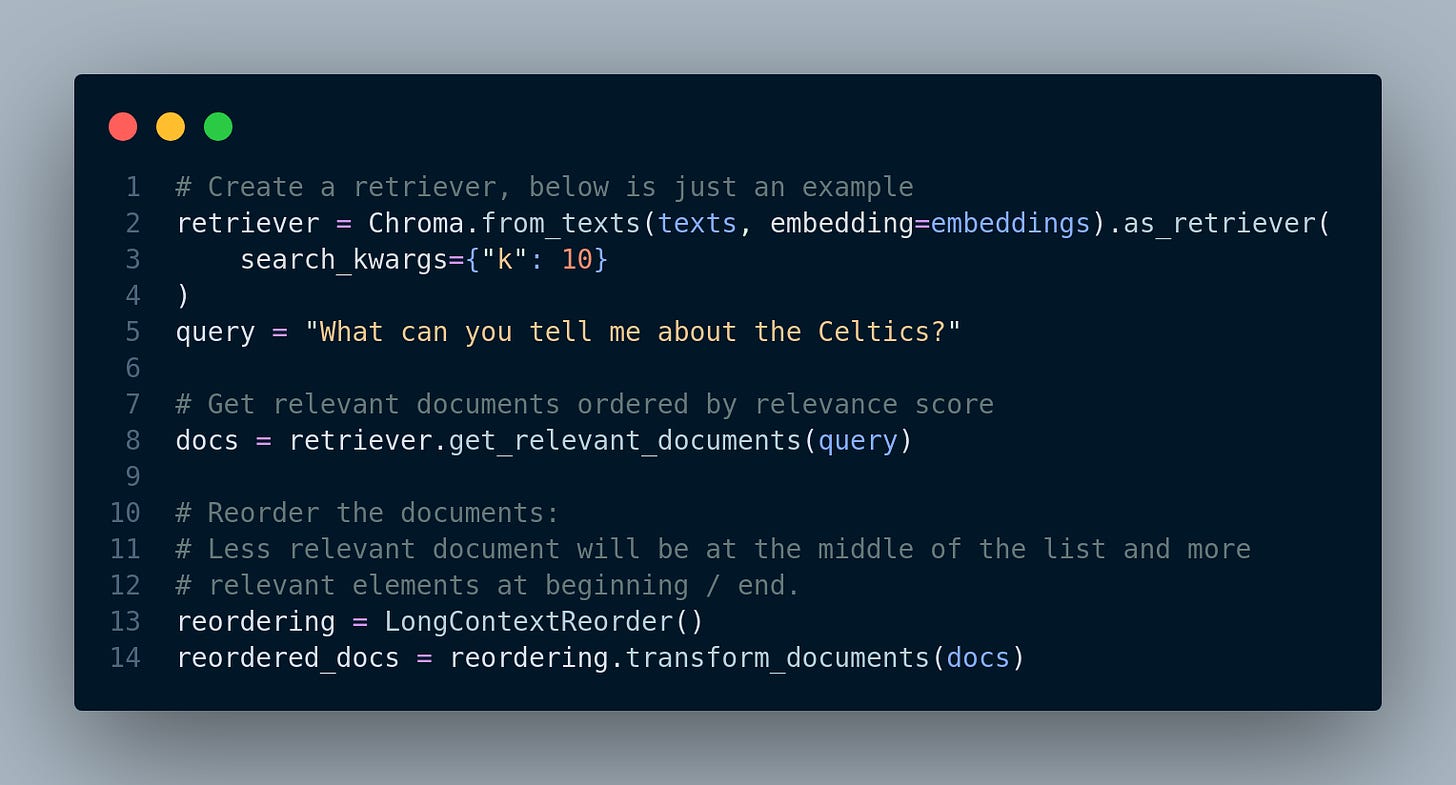

To implement this, we need a function that takes the documents returned by the retriever and reorders them so that the most relevant ones sit at the beginning and end of the context.
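A rough sketch of such a function, assuming the retriever hands back `(text, score)` pairs where a higher score means more similar. The function name `build_reordered_context`, the `top_k` parameter, and the result format are illustrative assumptions, not any particular library's API, and the scores in the example are made up.

```python
from typing import List, Sequence, Tuple


def build_reordered_context(
    results: Sequence[Tuple[str, float]], top_k: int = 5
) -> List[str]:
    """Hypothetical helper: take (text, similarity) pairs from a retriever,
    keep the top_k matches, and order them for the prompt so the most
    relevant chunks sit at the beginning and end of the context."""
    # 1. Rank by similarity, highest first, and keep only the top_k hits.
    ranked = [text for text, _ in sorted(results, key=lambda r: r[1], reverse=True)[:top_k]]
    # 2. Ranks 1, 3, 5, ... go to the front; ranks ..., 4, 2 go to the back.
    return ranked[0::2] + ranked[1::2][::-1]


# Example with made-up scores (illustrative only).
hits = [("doc C", 0.62), ("doc A", 0.91), ("doc E", 0.35), ("doc B", 0.80), ("doc D", 0.47)]
# The joined string is what would be placed in the LLM prompt as context.
context = "\n\n".join(build_reordered_context(hits))
print(build_reordered_context(hits))  # ['doc A', 'doc C', 'doc E', 'doc D', 'doc B']
```

Sorting happens before reordering, so the function does not rely on the retriever returning results in any particular order.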

LangChain implements this reordering in its LongContextReorder document transformer.
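`LongContextReorder` can be imported from `langchain_community.document_transformers` in recent LangChain versions, and its `transform_documents` method takes the list of `Document` objects your retriever returned. To show the idea without installing LangChain, the sketch below mimics the alternating placement that, as far as we can tell from its source, `LongContextReorder` uses. It walks the documents from least to most relevant and pushes each one alternately onto the front and the back. This is a stand-in, not LangChain's code, and `alternate_reorder` is a hypothetical name.

```python
from collections import deque
from typing import Deque, List, Sequence, TypeVar

T = TypeVar("T")


def alternate_reorder(docs: Sequence[T]) -> List[T]:
    """Hypothetical stand-in for LangChain-style 'lost in the middle' reordering.

    Assumes docs is sorted most-relevant-first, as retrievers usually return it.
    """
    out: Deque[T] = deque()
    # The least relevant documents are placed first, so they get pushed toward
    # the middle as the more relevant ones are added on the outside.
    for i, doc in enumerate(reversed(docs)):
        if i % 2 == 0:
            out.appendleft(doc)
        else:
            out.append(doc)
    return list(out)


print(alternate_reorder(["d1", "d2", "d3", "d4", "d5"]))  # ['d1', 'd3', 'd5', 'd4', 'd2']
print(alternate_reorder(["d1", "d2", "d3", "d4"]))        # ['d2', 'd4', 'd3', 'd1']
```

Because the alternation starts from the least relevant end, an even number of documents puts the top match last instead of first. Either way it sits at an edge of the context, which is where the paper found models use information best.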

For more discussion on this topic…

Subscribe to Our YouTube Channel!

We are kicking off our YouTube channel in the new year, and we invite you to join us as we walk you through some of the intricacies of AI, guided by feedback from our readers, friends, and colleagues!

We want our channel to be about AI for everyone. As in this newsletter, we'll talk about new AI products, the latest trends, the nitty-gritty engineering details, career insights for AI enthusiasts, and, of course, one of our favorite topics: the entrepreneurial side of AI 🥳

We're here to show you how to ride the AI wave and become your own entrepreneur using the cool tools available on the market.

🛠️✨ Happy practicing and happy building! 🚀🌟

Thanks for reading our newsletter. You can follow us here: Angelina (LinkedIn or Twitter) and Mehdi (LinkedIn or Twitter).

Sources of images and quotes:

🔍 Paper: Lost in the Middle: How Language Models Use Long Contexts: https://arxiv.org/pdf/2307.03172.pdf

🖼️ Blog posts:

Overcome Lost In Middle Phenomenon In RAG Using LongContextRetriver: https://medium.aiplanet.com/overcome-...

LongLLMLingua: Bye-bye to Middle Loss and Save on Your RAG Costs via Prompt Compression: https://blog.llamaindex.ai/longllmlin...

AI Research Highlights In 3 Sentences Or Less (June-July 2023)

Tweet by LlamaIndex: https://twitter.com/llama_index/status/1705009024050270456

📚 If you'd like to learn more about RAG systems, check out our book on RAG.

📬 Don't miss the latest updates: subscribe to our newsletter!