When evidence is thin... (how to think, not what to think).

'Doing' policy and practice in 'governance' (and accountability, conflict, inclusion, humanitarian etc) often means making decisions at pace, despite evidence being thin. This note tries to help.

Why this note for policy people and practitioners?

This note started during COVID to support DFID’s governance cadre to think through use of evidence (particularly social and political research) during the crisis. It was also used by other specialists working in isolation and at pace on the ‘evidence thin’ frontiers of policy and practice. It also offers some advice for researchers who seek ‘policy traction’.

I tailored versions of the note for other agencies with similar challenges: when you are an over-worked specialist/adviser, how do you keep your policy and practice at least ‘evidence informed’? I have tried to make this version as generic as possible.

Feedback very welcome.

So, who is responsible for evidence? (Yup. You.)

The place of evidence has been debated since the birth of the ‘governance specialist’. We have done great things without evidence, using gut feeling, deep experience, and buckle-swash. Or so our legends tell us….

So why bother with evidence? A starting point for the place of evidence for all UK civil servants is the ‘Civil Service Code’, which outlines core values: Honesty. Integrity. Impartiality. Objectivity [1].

Q: Can you ensure integrity, impartiality, objectivity without the use of evidence?

I’ll come back to this later in the note, but I think it’s an important starting point. This is why I cared so much about evidence in government – not just because it will hopefully lead to greater impact, but because it’s at the heart of the core values of public servants.

If you work for a different government, or any other organisation (multi-lateral, NGO), it is worth thinking about this – do you have a similar code that is or could be a foundation for your use of evidence?

How can I find the right evidence in a hurry?

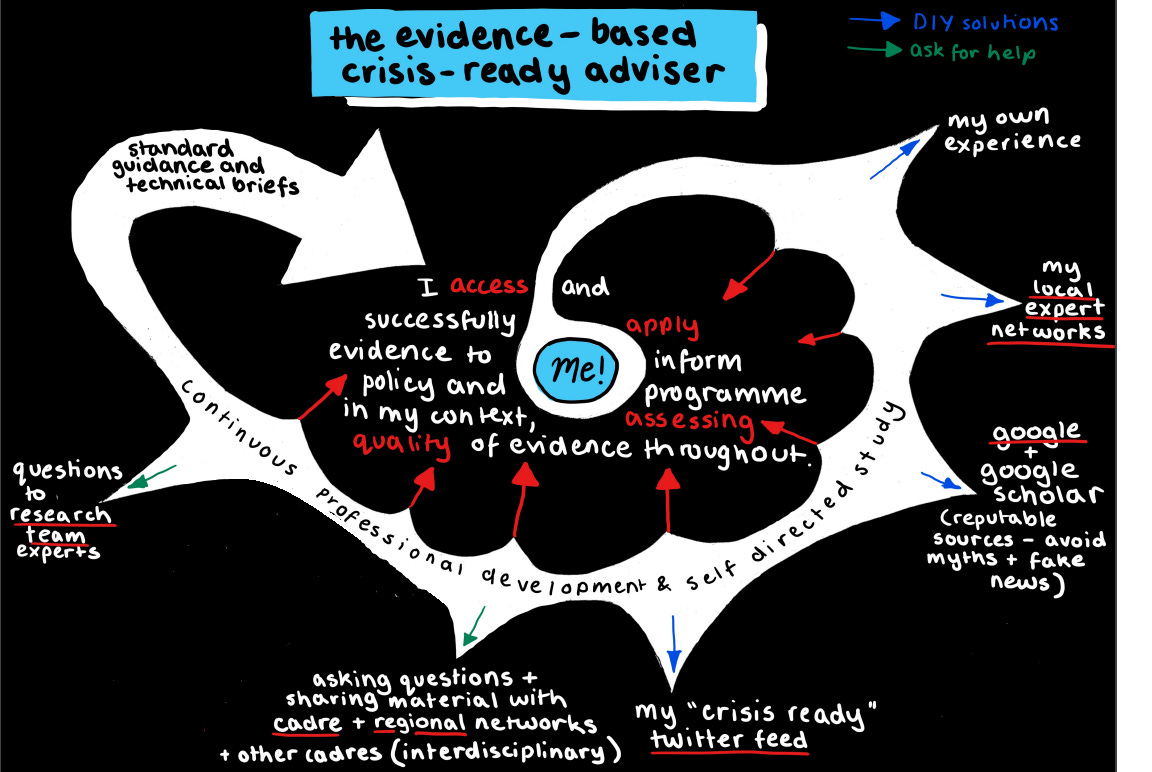

In the heat of policy and practice, we rarely have the luxury of engaging with research and evidence at the pace we’d like to. Usually we’re working under pressure and with competing demands. This note offers some pointers about ‘working smarter’. There are probably formal and definitely informal systems to help you find the evidence that you need, but the system starts with you. This diagram is a Covid lockdown relic (as usual I dragooned my daughter to make it for me).

The tools in the bottom left corner of the loop are specific to DFID (at the time) but your organisation may have similar support in place. But the others are all open and generic and the most important bit is the Me! in the middle. Many researchers and evidence-into-policy experts love helping people find evidence, but we need them to have had a ‘structured google’ first. We don’t google for you, basically.

Use of evidence is an ongoing workout – not just for a new investment or programme. For those who like their metaphors sporty:

Evidence-based advisers need to train between the big games, and can’t expect to be match fit without putting in the effort (I don’t!).

What’s at stake if we are too busy for evidence?

We all feel that we’re too busy to read research, but a lot is at stake – money, failed impact, unintended consequences, reputation. If we don’t keep up to date with evidence in our field, and if we then keep on doing the same old things unaware that new evidence suggests that they don’t work, we can’t do our jobs as well as we need to.

Worst case? We can do actual harm.

All advisers and technical experts (should) know their key resources and services, and many of these are public – K4D and GSDRC, and wider resources such as World Bank and Google Scholar. In olden times Twitter could be a great resource for staying on top of latest research (though it could take time away from actually reading).

If your organisation has a research department, do they have all the answers?

Some, but not all, development organisations fund their own research, often driven by that organisation’s priorities. The research they fund, the kind that leads the field in original concepts and insights, new methods and data, typically takes 3 to 5 years to complete, and it cannot cover every theme, sub theme, and country of interest. All of this research should be a public good (published and open access), as well as tackling priority needs.

Any organisation working in ‘development’ or public policy that also funds research is ideally placed to ensure that the research is ‘relevant’ by engaging with policy and practitioners from start to end of the research cycle, and also ensure that all research produced is rigorous, timely, and freely available.

Nose to tail policy engagement

In DFID’s Anti Corruption Evidence (ACE) research programme we coined the phrase ‘nose to tail policy engagement’ – meaning that the researchers aimed to engage with policy makers from the very start to the very end of the research cycle. This helped maximise the chances that research was relevant, taken up, and used.

There were also institutional incentives to demonstrate credible ‘pathways to impact’ - not for every piece of research, but for at least a few sub-projects within the large and diverse portfolio.

However, any ‘evidence base’ is much bigger than your organisation’s own research, and we should not just ‘eat our own’. We have a natural tendency to know our own research best, and to promote our own known researchers. For me, this can sometimes lead to an evidence base that’s predominantly UK-led and anglophone, no matter how hard I/we work to avoid this. I try and be aware of my ‘priors’ and biases and consciously reduce their effect.

There are benefits of starting with your own organisation’s research, such as geographical and thematic fit, as well as proximity and access to researchers. But there are risks, such as group think and a geographical skew that may lag behind rapidly changing global priorities.

Just help me answer the big questions!

What can research nerds or ‘evidence intermediaries’ help with?

Evidence is out there, and anyone can find it. But there is some ‘art’ in knowing the evidence in a field, and assembling or curating available research to try to inform new policy or operations. This is the kind of service that experts provide. Getting the best out of this support requires some preparation from you – the user. There are a lot of advisors, policy people and practitioners out there, but far fewer supporting experts or ‘evidence intermediaries’, and knowing how best to draw on people like me as a resource helps us all.

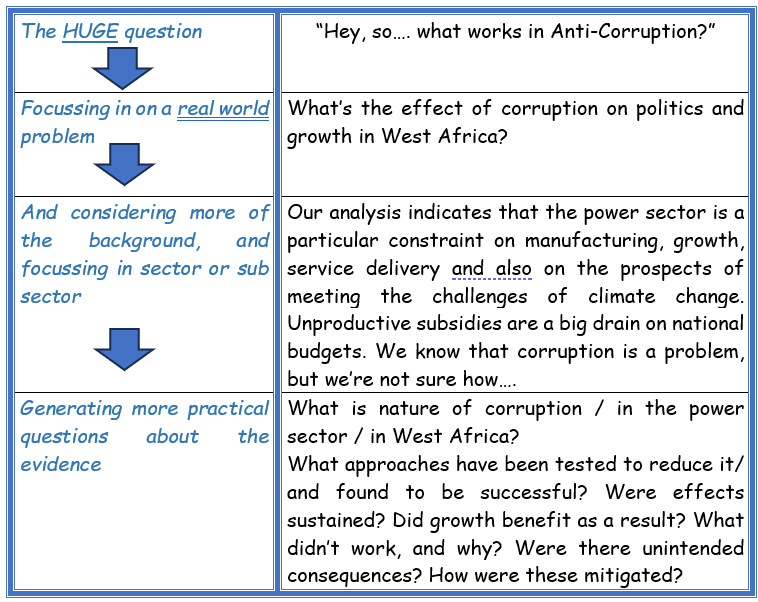

I often get asked very broad questions like ‘what is the latest evidence on tackling corruption [2]?’ The person asking is usually more important than me, so I try and be polite, and just give them a few ‘verbal bullet points’ of what I have been excited about that week. Of course, they would rather have a silver bullet (Some are really saying ‘I know that there is no silver bullet but what is the silver bullet?’).

However, their question is a bit like asking ‘what is the evidence on tackling diabetes?’ The answer to questions like this is always, ‘It depends’.

If the ‘evidence base’ is the mass of available research, and if each piece of research tackles one or a few specific questions, then the potential universe of evidence is vast. The actual stock of published, useable research may be smaller, but it’s also pretty large.

Related to this, any public policy (or business case, investment, or programme) to tackle corruption (or diabetes) will be complex and selective, not responding to every bit of research available, and will also have to manage contradictory findings and context-specific factors. It will focus on a sub-set of available evidence, choosing a few areas of promising intervention, and examine evidence for critical steps (e.g. causal path, or theory of change) within these. This is the territory of the ‘evidence informed’ – there is quite a bit of human mediation, or curation, involved.

For diabetes, for example, if the real starting point is prevention, then within this there will be huge amounts of evidence on a range of specifics - genetic factors, diet, exercise, as well as what these look like within a particular context (national, socio-economic, gender, ethnicity, perhaps even political economy etc).

Any big governance question is almost certainly too broad to be useful. The more specific a question or set of questions is, the more help existing evidence is likely to provide. Here’s an example of a real life ‘work through’ (try it on your priorities):

What you need to know about the ‘state of evidence’

Governance science or Governance art?

“Governance research” may seem like a relatively young field, but it incorporates well-established fields and, like any field, requires filtering of research for quality. Anyone who has been involved in an ‘evidence gap map’ or a systematic review will have experienced this. The apparent depth of evidence quickly thins out when we filter for relevance and for (any definition of) quality. Sometimes there may be a lot of evidence on a particular subject but only from contexts that may not be easily generalisable. It may be that evidence is cross-national and claims to be generalisable, but contextual/political analysis tells us otherwise. This means we spend a lot of time not just searching for evidence but also thinking about whether it can translate in our own work – and suitable evidence may look thin.

So, does thin evidence let governance off the hook? If there isn’t much research, does this mean that governance advice is more art than science? More gut than brain? No. It really doesn’t.

Governance work can be seen as a dynamic balance between ‘know how’ (professional competence and judgement); ‘know who’ (networks, politics); and ‘evidence’ (independent research). Depending too much on any one of these is risky, as is letting them get stale. Doing without evidence is also not allowed – again, see those civil service values that bind us to principles like Honesty. Integrity. Impartiality. Objectivity.

Are there governance failures from doing without evidence? Absolutely. Think of technical projects that were a replication from another setting but did not realise intended impacts, sometimes blamed on a ‘lack of political will.’ Anti-Corruption commissions [3]. Delivery units. Decentralisation. Short route accountability. Police training (and much other training)….

What do researchers do for advisers, specialists, country teams and programmes?

I think that teams that take the time to engage with relevant researchers tend to see tangible benefits – expert inputs for business cases, advice, presentations that help keep knowledge updated, even a pathway to new careers (Ha! Me). UK researchers (in particular) are motivated by seeing their research have policy impact. If you use their research in a programme, they could write an impact case study that could help their department get extra core funding from government.

However, researchers are not consultants – they are busy, independent, play a key challenge function, and should not bend research to fit our needs. Research may be collaborative in process, but this can’t drive research outcomes or stray into ‘policy-based evidence making’.

Research programmes can also be interventions in themselves. World leading researchers provide evidence-based advice to donors, Governments, World Bank and others on policy in that country or even globally. As aid programme budgets reduce and we aim to get more impact from expertise and influence, research – and the experts and networks – could become even more attractive resources for your portfolio. However, there are risks – researchers should present the body of evidence, not push their own research. And research must be independent and objective – so be wary of practitioner or consultant researchers who describe their own success.

The value of research evidence is built on independence, transparency, rigour, and protecting academic reputation. NGOs and consultant reports – even those led by prominent thinkers - are a valuable complement to, but not a substitute for, good research. Use healthy scepticism when drawing on ‘evidence’ that was produced by anyone with a vested interest in a certain position, such as an activist NGO with a report on their priority problem or a think tank pushing an area where they hope to generate more funding. Exercise caution when considering their chosen strategy to tackle the problem. Ask questions about who funded the research and why. This is good practice no matter what. Good research will be open about potential conflicts of interest, but sometimes it’s up to us to figure this out.

How to find the right evidence for your new thing

Practical ways to find the ‘least bad thin evidence’ for your theme in your context

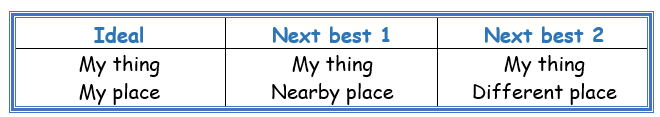

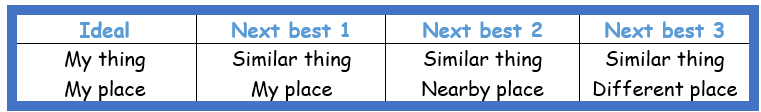

When searching for relevant evidence to inform work in any sector and specific context, compromise is usually required. We all hope to find a stock of recent, high quality research on our specific subject in our specific setting. But it rarely exists – particularly if quality matters. However, you can still find next best (or ‘least bad’) evidence to give insights. This – with caveats – is better than gut instinct or guessing.

Here are four practical examples of how you might find the best (least bad) evidence:

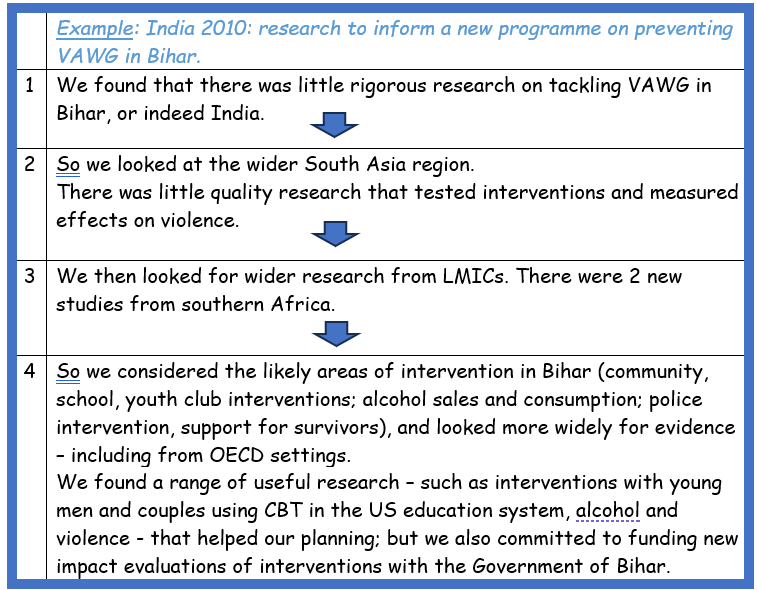

i) My thing, different place

Here’s a ‘work through’ from an earlier round of DFID strategy and budget planning. It helped cement a major investment - a programme and new research.

In crude terms, this process is -

ii) Similar thing, different places

Another dimension of this approach can work when we fail to find any research on ‘my thing’ anywhere, but with some careful lateral thinking, can still find relevant research to provide cautious insights.

An example is looking at how to tackle post-conflict and militia violence. High quality research is still quite rare, but there is some relevant research from the US on understanding and tackling school and gang violence – understanding masculinity, gender and social norms, and some evidence on interventions and their effectiveness. With due caution, insights can be drawn [4], draft ‘theories of change’ sketched out, and potential interventions considered for adaptation, particularly if you build new research on ‘my thing, my place’ into your programme.

iii) Apply theory

Governance research and practice have a long history of theory. Even just from the 1980s on, we can think about New Public Management, tax and the social compact/contract, good governance and growth, corruption and principal-agent theory and so on. A criticism of FCDO research in the past was that it was too theory based and not always empirical enough. Over the last 10 years we have seen more and more empirical testing of theory in multiple settings. Even if the empiricists have not tested the theory in your country or sector, you can use these to seek insights. But beware evidence where you can’t see – even if only implicitly – the underlying theory.

iv) Evidence for the critical steps in your Theory of Change

Think through your likely programme or policy intervention and draft theory of change, and examine the evidence underpinning the critical causal pathways and assumptions on which the ToC hangs. For example, any programme that involves ‘capacity building’ and training can draw on a host of evidence about how to understand and measure training effectiveness, as well as what kind of impact – individual and organisational - is possible from training investment (spoilers: not pretty). Ditto for public procurement, last mile delivery, etc. Fudging the evidence underpinning ToCs is usually a good way to set up problems down the line.

Some really common evidence traps waiting to catch you…. (so be warned)

Trap 1: Governance specialists have a reputation for saying ‘it’s complicated!’. Nobody wants a governance and politics essay. But the opposite – short lists of ‘what works’ or ‘best buys’ – risks stripping out nuance and the importance of context, and over-simplifying effectiveness.

Repeated editing of evidence summaries exacerbates this. Word limits can kill nuance.

Trap 2: When claiming that ‘research demonstrates that x is effective’, think hard about effect sizes reported by researchers and what these mean in the real world. Medicine has a useful term – an effect may be statistically significant, but not ‘clinically significant’ – so not worth implementing, because the real world benefits on health are so small. We need to think in the same terms when it comes to ‘governance’ interventions.
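To make Trap 2 concrete, here is a rough sketch with made-up numbers (none of them come from a real study). It assumes a hypothetical intervention that shifts an outcome by 0.02 standard deviations, and uses a simple two-arm z-test to show how the same tiny effect goes from ‘not significant’ to ‘highly significant’ purely because the sample gets bigger. The function name and the sample sizes are illustrative assumptions.

```python
import math


def two_sided_p_value(effect_size_sd: float, n_per_arm: int) -> float:
    """Two-sided p-value for a z-test comparing two equal-sized arms.

    The difference in means is expressed in standard deviations, so the
    standard error of the difference is sqrt(2 / n_per_arm).
    """
    standard_error = math.sqrt(2.0 / n_per_arm)
    z = effect_size_sd / standard_error
    # For a standard normal Z, P(|Z| > z) equals erfc(z / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2.0))


# Hypothetical effect: 0.02 standard deviations, i.e. very small in practice.
TINY_EFFECT_SD = 0.02

for n_per_arm in (500, 100_000):
    p = two_sided_p_value(TINY_EFFECT_SD, n_per_arm)
    verdict = "statistically significant" if p < 0.05 else "not significant"
    print(f"n per arm = {n_per_arm:>7}: p = {p:.6f} ({verdict})")
```

The effect is identical in both runs. Only the sample size changes, which is why the p-value alone says little about whether an intervention is worth funding.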

Trap 3: Assume that in a real-world application, effect sizes will reduce. Application at scale is messier than in an impact evaluation. We may know that a discrete intervention works – such as CBT delivered by an NGO to reduce young men’s propensity to violence. But what does evidence tell us about how to scale this up, usually in government systems? ‘Scalability’ is a growing science in its own right, and governance can learn from sectors such as education.

Trap 4: Related to this is realistic time scale – when did researchers measure any change? If you use evidence of effectiveness, be realistic about when your programme will see results.

Trap 5: In the long term, two things may move in the same direction, but it does not mean that one (e.g. good governance) causes the other (e.g. growth). Even if a causal relationship does underpin a long-term trend, this does not mean that we can ‘instrumentalise’ this in a short donor funded project.
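A toy illustration of Trap 5, using entirely invented series (the names `governance` and `growth` are labels only, not real indicators). Both series rise steadily over 41 years, with unrelated wobbles deliberately constructed to have nothing to do with each other. The levels correlate almost perfectly because of the shared upward trend, while the year-on-year changes do not correlate at all.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def yearly_changes(series):
    """Year-on-year differences, which remove a steady trend."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


YEARS = range(41)

# Invented data: a steady trend plus a wobble. The two wobbles follow
# different repeating patterns that are unrelated by construction.
governance = [t + (1 if t % 2 == 0 else -1) for t in YEARS]
growth = [2 * t + (1 if t % 4 < 2 else -1) for t in YEARS]

corr_levels = pearson(governance, growth)
corr_changes = pearson(yearly_changes(governance), yearly_changes(growth))

print(f"Correlation of levels:         {corr_levels:.3f}")
print(f"Correlation of yearly changes: {corr_changes:.3f}")
```

This does not show that a causal link is absent in the real world. It only shows that a shared long-term trend is enough to produce a strong correlation, so the correlation on its own is not evidence of cause.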

Trap 6: How old is that study you cite? If it is a decade or more old, be sure that it is really ‘seminal’, and that you know the research that has happened in the years since.

Thinking about ‘public policy’ versus ‘aid’ (and ‘diplomacy’)

Across the fields of governance, there are research themes on public policy that could be applied in any context (e.g. public procurement); and then there are questions that relate specifically to ‘aid’ and/or ‘diplomacy’ and the efforts of external actors (e.g. a donor funded project or policy initiative aimed at encouraging public procurement reform).

It’s easy to conflate ‘public policy’ with ‘aid’, but distinctions are often important. For example, there is new research on the importance of food and fuel price rises in catalysing ‘critical junctures’ for political change. However, the question of how or whether outside actors can get positively involved in such ‘junctures’ is a separate research question. More broadly, there is a range of governance-relevant research on the effectiveness of specific donor mechanisms such as payment for results, and budget support.

And finally…. Governance Politics and Technical

The final context point is an old one in governance – balancing the technical with the political. This can include in-house analysis, more formal approaches (PEA etc) and research – either directly on your theme and context, or insights from research in other settings. This can be sector specific (political corruption in power generation) or applying useful theory to your setting or sector (the political settlement approach). If anyone is writing ‘lack of political will’, they need to keep up with rolling governance evidence and commentary. Etc!

Finally: those Codes again. Evidence and… Honesty, Integrity, Impartiality, Objectivity.

Hearty thanks to Heather Marquette and Emma Wind for their comments on the original version of this note.

[1] ‘Integrity’ is putting the obligations of public service above your own personal interests. ‘Honesty’ is being truthful and open. ‘Objectivity’ is basing your advice and decisions on rigorous analysis of the evidence. ‘Impartiality’ is acting solely according to the merits of the case and serving equally well governments of different political persuasions.

[2] Or Freedom of Religion and Belief. Or Open Democracy. Or Taxation and Development. Or Fiscal Decentralisation. Or tackling gang violence. Or tackling school violence…

[3] Anti-Corruption Commissions (ACCs) are a particular bugbear for my great friend, former partner in (serious organised) crime, and colleague, Heather Marquette. The evidence doesn’t show that ACCs don’t work, though this is almost always how it’s interpreted. The evidence shows that models originally developed in particular contexts don’t translate easily to others without significant adaptation. The real problem is that the traditional model would need huge amounts of resourcing and political protection to work in low-income contexts. There’s no reason, though, not to consider other models to pilot and test.

[4] Policy insights go both ways – e.g. Chris Blattman has worked on tackling post conflict violence in Liberia and on gang violence in Chicago with similar interventions (e.g. Cognitive Behavioural Therapy, alternative pathways, role modelling etc).