Latest AI developments explained (OpenAI SORA, World models, Q*), Part III

How does SORA work, and how is it connected to world models? What about the Q* algorithm? How can AI systems be made affordable for end users? The path towards personal LLM assistants, autonomous driving, etc.

In the second part of this article, we discussed how to improve the capabilities of large language models, and the first part explained the technology behind the latest video-generating world models.

As you can see from this example, recent AI technology is capable of creating realistic avatars of people from a single photo. Moreover, a realistic voice and realistic conversations (using prompted LLMs) can also be AI generated.

It is very likely that this technology will become widely available within a few years. I can imagine a product that offers people their digital clones. As the CEO of a large company, you could stay home and play with your children while your digital clones hold hundreds of one-to-one meetings with your employees. Another digital assistant would summarize the important takeaways for you and your management team. The challenge is to produce realistic videos in real time so that the conversation seems natural. And imagine the cost and energy required to run digital clones for millions of people.

Even for chatbots, which are much less computationally expensive than video generative models, you have to respect technological limitations and find the optimal performance/cost ratio to be able to run the service for millions of active users.

Making AI systems affordable

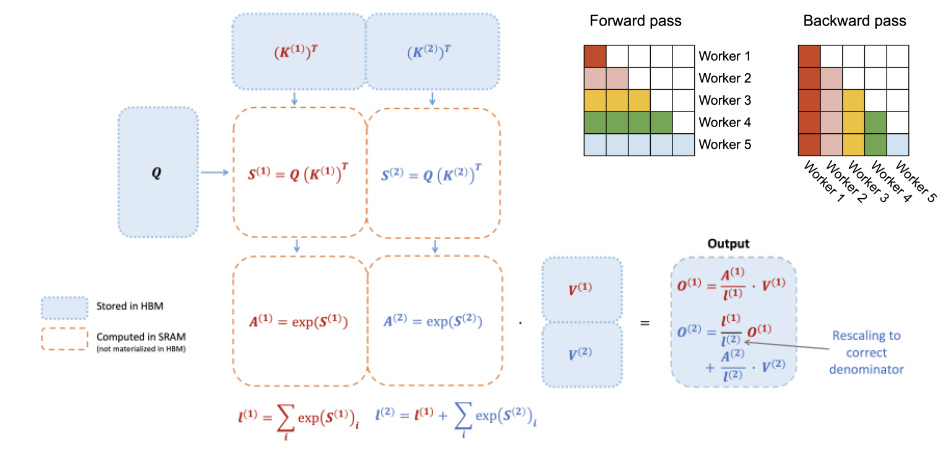

At the core of many of today's AI systems are so-called attention matrices, which store information about patterns and relations in the data. You can find attention not only in transformers but also in many other AI models. In standard attention, these matrices grow quadratically with the length of the processed sequence. For understanding and reasoning over long videos or documents (Gemini 1.5 Pro supports 1 million tokens), it is critical to speed up the computation of attention.
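To give a feeling for why long sequences are costly, here is a back-of-the-envelope sketch. It assumes a dense attention matrix with one entry per pair of tokens, 2 bytes per entry (16-bit floats), and counts only a single head in a single layer. The function name is illustrative, not part of any library.

```python
def attention_matrix_bytes(seq_len: int, bytes_per_entry: int = 2) -> int:
    """Memory for one dense seq_len x seq_len attention matrix (one head, one layer)."""
    return seq_len * seq_len * bytes_per_entry


# Doubling the sequence length quadruples the memory.
for n in (1_000, 100_000, 1_000_000):
    gib = attention_matrix_bytes(n) / 2**30
    print(f"{n:>9} tokens -> {gib:,.3f} GiB")
```

Under these assumptions, a single matrix for one million tokens would already take about 2 terabytes, which is why long-context models cannot simply store the full matrix.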

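Flash Attention is a popular technique that speeds up training and inference of transformers and other models, mainly by computing attention block by block so that the full attention matrix never has to be written to slow GPU memory. Ring Attention is a related technique that distributes the blocks across many devices, which makes it useful mostly for large-scale computational infrastructure.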
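The central trick behind block-wise attention can be sketched in a few lines of plain Python. This is only an illustration of the "online softmax" idea for a single query vector, not the actual Flash Attention kernel, which is a fused GPU implementation that also tiles the queries. All function names and the toy data are made up for this example.

```python
import math


def score(q, k):
    """Scaled dot product between one query and one key."""
    return sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q))


def naive_attention(q, keys, values):
    """Reference: compute all scores at once, softmax them, average the values."""
    scores = [score(q, k) for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(len(values[0]))]


def blockwise_attention(q, keys, values, block_size=2):
    """Same result, but only one block of scores exists at any time."""
    running_max = float("-inf")
    running_sum = 0.0
    acc = [0.0] * len(values[0])  # unnormalized weighted sum of values
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [score(q, k) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale everything accumulated so far to the new maximum.
        rescale = math.exp(running_max - new_max)
        running_sum *= rescale
        acc = [a * rescale for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            running_sum += w
            acc = [a + w * x for a, x in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]


q = [1.0, 0.5]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 2.0], [0.5, 0.5]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
print(naive_attention(q, keys, values))
print(blockwise_attention(q, keys, values, block_size=2))
```

Both calls should print the same vector up to floating-point rounding. The block-wise version only needs memory proportional to the block size, not to the full sequence length.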

Instead of updating all the weights of a model during fine-tuning, you can train only a low-rank approximation of the weight update (see the popular LoRA), typically applied to the attention projection matrices, and also combine it with storing the frozen base weights in low (4-bit) precision (QLoRA). Such techniques help to reduce the memory footprint of LLMs to the extent that you can run them on your mobile phone. Furthermore, in some cases LoRA even improves generalization abilities and precision.
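A rough sketch of where the savings come from. The first function counts trainable parameters when a frozen weight matrix of shape d_out x d_in gets a low-rank update B·A with B of shape d_out x r and A of shape r x d_in. The second part shows a deliberately simplified 4-bit quantizer with one shared scale. Note that this is an assumption made for illustration: QLoRA itself uses a special 4-bit data type (NF4) with block-wise scales, which is not reproduced here. The matrix size 4096 and rank 8 are example values only.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in B (d_out x rank) plus A (rank x d_in); the base matrix stays frozen."""
    return rank * (d_out + d_in)


full = 4096 * 4096
lora = lora_trainable_params(4096, 4096, rank=8)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {full // lora}x")


def quantize_4bit(weights):
    """Simplified uniform quantizer: map floats to integers in [-7, 7] with one scale."""
    scale = max(abs(w) for w in weights) / 7 or 1.0  # fall back to 1.0 if all weights are 0
    return [round(w / scale) for w in weights], scale


def dequantize_4bit(codes, scale):
    """Recover approximate float weights from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.7, -0.35, 0.1, 0.0]
codes, scale = quantize_4bit(weights)
print(codes, dequantize_4bit(codes, scale))
```

Each code fits into 4 bits instead of the 16 or 32 bits of the original float, at the price of a rounding error of at most half a quantization step per weight.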

Researchers are also already working on hardware acceleration of transformers. Such specialized hardware will be especially important for onboard computers in autonomous vehicles, because it offers high inference speed and low power consumption.

Real-world AI systems

Many AI systems still do not bring enough value to users: the cost of running and maintaining them is higher than what users are willing to pay for them. But there are some exceptions, and we will discuss a few of them in more detail.

Autonomous Vehicles

Personalized Recommendations and Search

Personal Chatbots and Voice Assistants

Automated Customer Service

Predictive Analytics in Retail

Fraud Detection in Banking and Finance

Healthcare Diagnostics

Automated Trading Systems in Finance

Facial Recognition for Security and Authentication

Physical Robot Companions

Autonomous driving

Tesla already offers AI-based driving assistance to its customers worldwide. There are several variants of the product, depending on the AI capabilities.

Interestingly, the hardware is the same for all cars, so customers can upgrade or downgrade depending on their budget and the value it brings them. Tesla uses mostly cameras and perhaps a radar in Hardware 4 (launched in 2023).

Robo-taxis, however, are not economical yet, as they need expensive extra hardware and remote maintenance.

To make these systems truly autonomous, further research is required. Consistent with what was explained in the first and second parts of this article,

planning capabilities and ad-hoc reasoning need to be improved,

as well as benchmarks and their complexity.

As in other domains, future cars need to have a good world model and long-term memory, not just to remember shortcuts and driving tricks but, more importantly, to communicate with their passengers.

Personalized Recommendations and Search

Recommender systems were among the first AI technologies deployed at massive scale in real-world online scenarios, because their runtime is relatively efficient compared to other AI systems. Whereas streaming services are funded by subscription fees, many online platforms and social networks need to pay for these AI systems from advertising revenue or product profit margins.

These systems are also getting better. For example, a user can ask in natural language and get personalized recommendations. But again, running a large language model involves an additional cost, and one needs to take it into account when designing and deploying such systems.

In the future, recommender systems and personalized online search systems will also improve their reasoning and their interface with users. However, it is most likely that they will be paid for directly by users and act on their behalf.

Personal Chatbots and Voice Assistants

As you can see, personal chatbots and voice assistants are also developing towards long-term memory in order to know their users better. There is a viable business model and growing competition.

At the same time, their reasoning capabilities are also upgraded regularly. I believe there is a market for more expensive AI assistants with more computational, memory and data resources available. This will give rise to a new generation of startups building specialized AI assistants.

Conclusion

In the first part, I explained the technology behind the latest AI world models. In the second part, I focused on how to further improve the capabilities of AI systems, and this third part is about how to make AI systems affordable for real-world scenarios. I will be glad to receive your comments and feedback.

Additional resources:

ChatGPT: https://arxiv.org/pdf/2206.02336.pdf, https://arxiv.org/pdf/2206.07699.pdf, https://arxiv.org/pdf/2201.11903.pdf

Model free RL: https://arxiv.org/pdf/2208.07860.pdf

Diffusion world models: https://arxiv.org/pdf/2402.03570.pdf

Algorithm discovery: https://www.nature.com/articles/s41586-023-06887-8, https://www.jmlr.org/papers/volume24/21-0449/21-0449.pdf