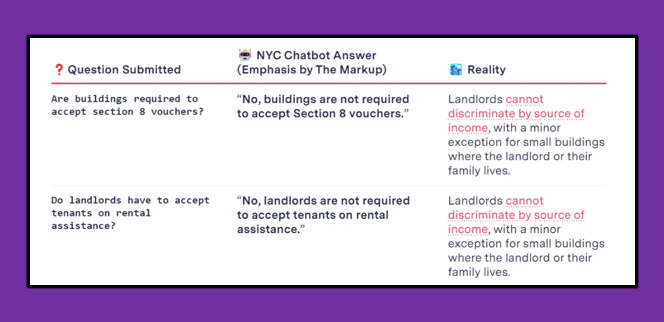

A recent Washington Post article reported on two chatbots that were both giving users incorrect tax advice. Another chatbot, released by the New York City government, gives users advice on renting out units and running businesses that breaks the law. What’s going on here?

The general case for a RAG (retrieval-augmented generation) chatbot is that you have a set of documents and you want a large language model (LLM) to answer questions specifically about those documents. Said documents might be internal or recent, meaning that no existing LLM would have been trained on them. Or maybe they were part of a training dataset, but you want a tool that's focused only on them and ignores everything else.

However, as these incidents have shown, releasing a functional RAG chatbot can pose significant challenges. This piece will break down how a RAG chatbot works and present some alternatives that might get you what you're looking for in terms of content or document visibility – with much less work and risk. And if you do want to use a RAG chatbot, I'll give you some ideas for requirements-gathering and user testing.

Two Parts of the RAG Chatbot

The two basic parts of the RAG chatbot are the search and the LLM. The search component takes the user query, searches your documents – which you've pre-processed – and returns chunks related to the query. These document chunks are then passed to the second part of the tool, the large language model. The LLM is given both the user prompt and the document chunks. Even if you have additional architecture on top of this, this is the core of what you're doing.

Each of these steps comes with its own set of choices. There are different ways you can pre-process and chunk your underlying documents. There are also different ways you can operationalize relatedness or similarity between texts to get the best matches. Typically, RAG chatbots use a concept called 'semantic similarity,' which relies on related words or concepts rather than exactly matching text to classify documents as similar. And then within the LLM step, a major choice is which LLM to use; plus there are choices about things like system prompts, temperature, and how to handle conversation history.

A lot of things can go wrong with these core steps. You can find the wrong document chunks – or there might be no relevant document chunks at all. Your LLM may synthesize them poorly, or ignore them entirely.

There are ways to fix each of these problems. But do you have to? In the next sections, I’ll talk about alternatives to RAG chatbots that make your content or documents accessible to varying degrees, possibly at greatly reduced time and cost.

The Frequently Asked Questions (FAQ) Page

The article on tax-advice chatbots giving incorrect tax guidance contained the following quote: “The company says it curated AI Tax Assist’s answers using the most common questions it received from clients in the prior year.”

If your goal is really just to handle a finite number of questions – as opposed to an unknown/potentially infinite set of prompts – there’s a much lower-tech way of delivering this: a frequently asked questions page.

A FAQ page lets you vet all of the text that’s being provided to users and focus on the highest-value questions. You can guarantee that every answer is accurate. And you can also create and add to it without developer time or compute.

The Searchable Document Repository, With or Without Summaries and Topics

What if you have a lot of documents and you want users to be able to access any information within them? This situation might call for a searchable document repository, which is easy to host on an internal or external website. SharePoint is a popular way to host documents, or you might use GitHub if you have technical users. When you leverage these websites' search functionality (or build your own), that works via string matching – like, you search for a word or text pattern, that exact word or text pattern is found, and the matching documents are returned.

But what if the documents are of different file types, or they’re unsearchable? Maybe they're Excel workbooks with a lot of numbers across multiple sheets, or scanned PDFs. Maybe they're very long, and so common search terms will bring up so many results as to render the search untenable for users.

Here are a few ways I might approach that problem:

Pull the text out of the documents. There are libraries for extracting text from spreadsheets and PDFs. There are tools specifically for scanned documents. And you’d have to do this part anyway if you wanted to process your data for a RAG chatbot.

Use an LLM’s summarization and classification capabilities. LLMs are really good at summarization, and there are even smaller, summarization-specific open source models that you can run on your own. You can also use LLMs to generate lists of topics covered in the documents, and you can make both the summarization and topic fields searchable. And you can add whatever specific fields your users want, like document type, when it was written, or even other fields you’ve had LLMs generate based on the content.

A searchable document repository can be expanded fairly easily as you get new documents, although you’ll need some kind of pipeline for document processing and tagging/summarization, if you’re using those capabilities. By adding in the LLM component, you’re introducing some risk, which you can mitigate by having a person read over the summaries and topics. You can see everything your users are going to see.

The Document Similarity Without the Chatbot

Another option is to just do the chunking and document similarity component of the RAG chatbot without the actual chatbot piece. This means that people could ask questions, and instead of getting an LLM-generated answer, they would just get the excerpts that your tool thinks are relevant to their query, along with links to the full documents.

Why might you do this? Like with the previous option, you have a lot of documents you want people to be able to search through, but maybe you also want them to be able to ask questions conversationally. Or maybe string matching isn’t working well and you think semantic similarity will be better.

This is a neat example. The National WWII Museum in New Orleans records interviews and then lets visitors ask questions of the interview subjects. The questions are translated to text and some kind of document similarity algorithm is used to return clips that are intended to be relevant to the question that was asked.

But in that case, why not go all the way and provide the chatbot component too? Because good chatbots are more expensive in terms of compute and labor and they provide users with a false sense of confidence. Like, in the above example, you wouldn’t want to generate new videos of interview subjects saying things they never said – you just want to make the things they actually said more accessible.

If you go this route, you should still test whether you're finding the correct documents that relate to user queries, and whether you're performing better than you would using a normal text search.

Finally, the RAG Chatbot

But let’s say you’ve considered the alternatives and you still want a RAG chatbot. Fine.

There’s a lot of fast-moving work being done on better architecture for RAG if you’re in that space. If you have to build this out, I think that’s worth exploring, but that’s not the focus of this piece.

What I will talk about is requirements-gathering and user testing. Basically, this comes down to: if you’re planning on releasing this to customers, you should treat it like you’d treat any other piece of software. Don’t invest time until you know specifically what you want it to do, kill it if it’s not getting there, and test it to make sure it does those things before you release it.

That means you should start with a requirements-gathering process where you talk to potential users or people who understand their needs, and you should figure out a couple of things:

What kinds of questions are they going to want to ask your chatbot? Maybe you can get that from an existing customer service process, or records on what people search your website for.

If this is going to fail, how should it fail? Options include refusing to answer in some cases, just suggesting documents but not providing text synthesizing them, or providing a possibly incorrect answer. The best approach depends on the use case. For critical domains like tax advice, prioritize accuracy over coverage. For less crucial areas, you might decide to be wrong sometimes, and that’s alright. For instance, if you’re providing book recommendations, it’s preferable to occasionally make a bad recommendation than to frequently refuse to respond.

Before you release your tool, you should test it to make sure that the way users are actually phrasing their questions is getting the responses you intended for them to get.

And then, after you release your tool, you can monitor how it’s doing. What new questions are they asking? How is it performing? You can do this manually, by reviewing log files, or you can automate it to some degree in a couple of different ways.

First, you can write questions that are representative of what users ask, then create performance tests on those types of questions. This can get you started, and you can also try rephrasing your questions and asking multiple times.

Second, you can use automated evaluations that work with any question people are asking, without knowing specifically what you want the answer to be. These evaluations look for things like completeness, or if the response has the components that were asked about, as well as whether the LLM was solely using information passed to it from the document chunks. There are various organizations providing tools in this space, including Athina AI and Aimon Rely – or you can write your own.

But you should do something. Because the trade-off for having a chatbot – the cost of that additional functionality – is higher variance and more uncertainty. If you have a FAQ page or a searchable document repository, you know everything your users are going to see. If you just have the document search component without the chatbot, you’re moving into somewhat more uncertain waters – but the worst outcome is that the documents they’re handed just aren’t very relevant to their question. But with the RAG chatbot, things could go pretty wrong if you give people bad information and they rely on it in some way – and there’s really no way to determine if that’s happening without testing and monitoring.

Conclusion

Making your internal documents more accessible, either internally or externally, is a good goal. A couple of times, I've run code to index documents on a shared drive to understand what information was available. And the concept of combining that with an LLM to actually synthesize material and answer questions – there’s a reason why this has been such a popular use case for LLMs.

However, if you’re going this route, you should anticipate putting some real work in, not only with solving technical challenges (and I haven’t even gone into scaling and cost issues here), but also with requirements gathering, monitoring, and thinking seriously about what error rate and type of errors you’re comfortable with.

But if you’re not up for that, you can get some of the functionality – making your documents more accessible – in a way that does put more of the burden on users, but also has a lower chance of going extremely wrong.

There's still a place for all levels of technology, from the low-tech FAQ and SharePoint page to solutions which use generative AI, but in ways that are static and verifiable, like summary generation, or which use the semantic similarity comparisons without the LLM part of RAG. What's appropriate really depends on your use case: what you're trying to deliver to your users, and what level of effort you want to put in.

Better a good FAQ page than a bad chatbot.

Great write up. Chatbots are often (usually?) more trouble than they are worth. I like that option of just the semantic document search without the LLM summarization.

What is weird is that regular Chat-GPT answers the NYC law questions better than the NYC chatbot. Whatever RAG solution they used, I don’t know how they made it worse than a generic LLM.