Superficial Significance

Shortcut to differentiating between statistical and clinical significance

In a recent post, I addressed the failure to distinguish between relative and absolute risk when interpreting research results. Similarly, confusing statistical significance with clinical significance is a common blunder in today’s world of exuberant and often exaggerated headlines. For a tangible example of the statistical vs. clinical significance trap, check out my breakdown below of a study I read during an ongoing exploration of sugar substitutes.

Bottom Line Up Front

Statistical significance describes whether an outcome could plausibly be a product of random chance alone or instead reflects a real difference.

Clinical significance describes whether or not an outcome is impactful in terms of real-world application.

When a given outcome is both statistically and clinically significant, the results are meaningful; however, statistical significance in the absence of clinical significance suggests that the difference displayed by the results is trivial.

Tails Never Fails

To start, let’s cover some background info. Because observational studies and randomized controlled trials (RCTs) had produced conflicting results, Peters et al. conducted a 1-year study (split into two RCTs, I and II) in 2014-2016. The study tested whether drinks containing non-nutritive sweeteners (NNS) – like sucralose, aspartame, and saccharin – impact weight management in subjects who reduce caloric intake for 12 weeks and then follow 40 weeks of weight maintenance.

Subjects in the experimental group were told to consume at least 24 ounces of drinks with NNS per day – the equivalent of two cans of diet soda – while those in the control group were instructed to eliminate all drinks with NNS from their diet and consume at least 24 ounces of water per day. Interestingly, subjects in neither group were restricted from consuming NNS from sources other than drinks, meaning, for example, that they could eat ice cream that contains NNS.

At its conclusion, the 52-week trial revealed multiple notable results, including that subjects in the NNS group both achieved and maintained more weight loss than those in the water group, although the water group also lost and kept off a considerable amount of weight. For our purposes of exploring statistical and clinical significance, however, we’ll zero in on the statistically significant hunger questionnaire results.

Statistical significance is a term used to describe whether a set of results could plausibly have occurred by random chance or represents a legitimate difference. You can think about this in terms of flipping a coin: the probability calculation says 100 flips should average out to 50 heads and 50 tails; however, anybody who has completed this experiment in grade school math class knows that the results typically don’t pan out so cleanly. Instead, we could see something like 63 tails and 37 heads due to sheer chance. If we were less familiar with Probability 101, we might take these results and mistakenly conclude that some factor reliably increases the odds that a flipped coin will land on tails. In actuality, our small sample size – 100 flips – led to a misleading result that a larger sample size would correct; i.e., if we flipped the coin 10,000 times, the outcome would likely be much closer to 50% tails and 50% heads.
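If you’d rather see the coin flip idea play out than take my word for it, here is a minimal Python sketch. The function name `tails_fraction` and the seed value are my own choices for illustration, and the exact fractions you get will depend on the seed.

```python
import random

def tails_fraction(n_flips, rng):
    """Flip a fair coin n_flips times and return the fraction that land tails."""
    tails = sum(rng.random() < 0.5 for _ in range(n_flips))
    return tails / n_flips

rng = random.Random(2024)  # fixed seed (arbitrary) so reruns give the same numbers
for n_flips in (100, 10_000, 1_000_000):
    print(f"{n_flips:>9,} flips: {tails_fraction(n_flips, rng):.3f} tails")
```

The smaller runs tend to wander further from 0.500, while the larger runs tend to hug it more tightly.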

When assessing their study outcomes, researchers leverage statistical methods to address this chance conundrum and test whether differences between their subject groups are legitimate or artifacts of randomness. A result is usually called statistically significant when it produces a P-value less than 0.05, meaning that if there were truly no difference between the groups, a result at least this extreme would turn up less than 5% of the time. In that case, researchers treat the difference as unlikely to be a fluke and conclude that the intervention or treatment likely produced an actual difference in the groups’ outcomes.
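To make the P-value idea concrete, we can compute one for the coin example: assuming a fair coin, how often would 100 flips land at least as lopsided as 63 tails and 37 heads? The sketch below uses an exact two-sided binomial calculation; the function name `two_sided_p_value` is my own, and this is one common way to do the test, not the method used in the study.

```python
from math import comb

def two_sided_p_value(tails, flips):
    """Exact two-sided binomial p-value, assuming a fair coin (p = 0.5)."""
    expected = flips / 2
    observed_gap = abs(tails - expected)
    # Count every outcome at least as far from an even split as the one observed.
    extreme = sum(
        comb(flips, k)
        for k in range(flips + 1)
        if abs(k - expected) >= observed_gap
    )
    return extreme / 2 ** flips

p = two_sided_p_value(63, 100)
print(f"p-value for 63 tails in 100 flips: {p:.4f}")
```

The answer comes out to roughly 1%, which is under the 0.05 cutoff. In other words, a perfectly fair coin will occasionally hand us a "statistically significant" result, which is why a single significant finding is a reason to look closer, not a guarantee.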

Clinical significance, on the other hand, conveys the weight of the results in terms of actual use: their applicability in a clinical or other applied setting. After all, this is what we care about: whether the study results translate into meaningful applications in the real world.

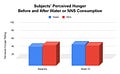

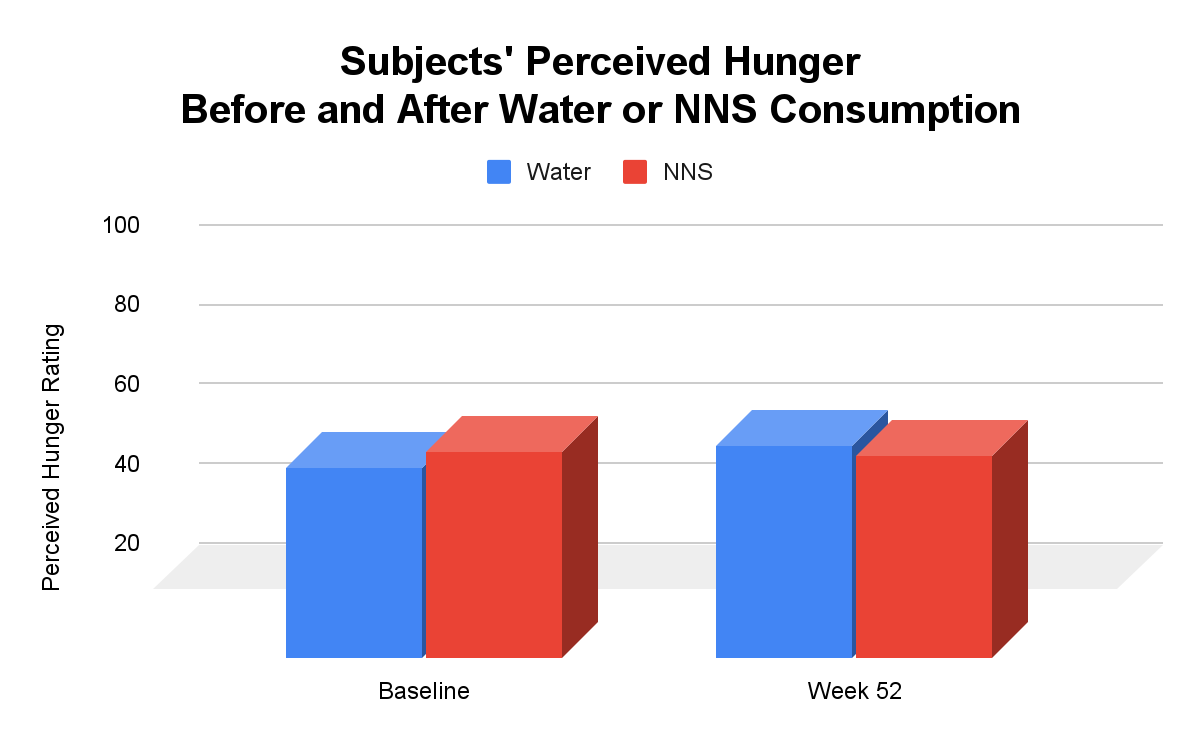

Returning to our study above, throughout the 52 weeks, the subjects completed several questionnaires addressing their perceived hunger on a scale from 1-100. From baseline to Week 52, the water group’s average response increased by 5.16 points, whereas the NNS group’s average response decreased by 1.28 points, leading to a statistically significant 6.44 point difference between the two groups. Though mainstream headline authors would likely capitalize on this statistically significant result, exclaiming that NNS cured hunger, we can reach a more precise conclusion if we consider this result’s clinical significance.

Remember, the questionnaire used here was on a scale of 1-100, which means that a difference of 6.44 translates to roughly the difference between 5 and 5.6 on a scale from 1-10. How do those results appear now? Figures 1 and 2 give a visual of these data.
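The arithmetic behind that rescaling is short enough to check yourself. The variable names below are mine; the two group changes are the figures quoted above, and dividing by 10 is the same rough conversion I used in the text.

```python
water_change = 5.16   # water group: average change in hunger score, baseline to Week 52
nns_change = -1.28    # NNS group: average change over the same period

gap_100 = water_change - nns_change   # between-group difference on the 1-100 scale
gap_10 = gap_100 / 10                 # the same gap, roughly, on a 1-10 scale

print(f"{gap_100:.2f} points out of 100 is about {gap_10:.1f} points out of 10")
# prints: 6.44 points out of 100 is about 0.6 points out of 10
```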

Sure, over a 1-year timeframe, a difference of a bit more than half a point on a scale of 1-10 could add up to meaningful differences in calorie consumption; however, it’s clear that such a difference is not colossal in a real-life application sense, as its statistical significance might have misled a less statistically savvy reader to believe. And, to their credit, I think the authors’ remarks in the paper’s discussion section appropriately described their hunger questionnaire outcomes as potentially meaningful but not earth-shattering.
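One reason a modest difference can still clear the statistical bar is sample size: hold the gap fixed, add more subjects, and the P-value shrinks. Here is a rough sketch using a normal-approximation (z) test. To be clear, the standard deviation of 25 points and the group sizes are numbers I made up for illustration; they are not taken from the study, and the study’s own analysis may well have used a different method.

```python
from math import erfc, sqrt

def two_sample_p_value(mean_gap, sd, n_per_group):
    """Two-sided p-value from a z-test on two equal-sized groups with a shared SD."""
    standard_error = sd * sqrt(2 / n_per_group)
    z = abs(mean_gap) / standard_error
    return erfc(z / sqrt(2))  # two-sided tail area of the standard normal

MEAN_GAP = 6.44     # between-group difference quoted in this post
ASSUMED_SD = 25.0   # hypothetical spread of individual scores, NOT from the study

for n in (20, 50, 150, 500):  # hypothetical group sizes
    p = two_sample_p_value(MEAN_GAP, ASSUMED_SD, n)
    print(f"n = {n:>3} per group: p = {p:.4f}")
```

Under these made-up inputs, the same 6.44 point gap goes from not significant in the small groups to clearly significant in the large ones, even though its real-world size never changes.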

Now, in addition to relative vs. absolute risk, you have the concept of statistical vs. clinical significance in your repertoire for assessing inflated mainstream headlines and, more importantly, interpreting actual research data.