Are You Concerned About AI Plagiarism?

We unpack the ethical considerations of AI in art, and clarify possible solutions.

I came across an interesting post when I was scrolling through Facebook the other day. The post contained an accusation that AI software was nothing more than a tool to enable the creative world’s cardinal sin: plagiarism. I tend not to comment on Facebook posts on principle, especially to people with whom I am not truly acquainted, but it raised some questions.

My instinctive response to this Facebook post was “this is stupid.” There are indeed many issues with the post at face value: its emotional oversimplification, the fact that the author conflates plagiarism and copyright law, and the basic fact that labeling the work “in the style of Wes Anderson” makes it definitionally not plagiarism. But the more I thought about it, the more I came to appreciate the depth and nuance inherent in the underlying issue. What does it mean to have generative AI enter the playing field in art, music, literature, and academia? Why is it such a profound spiritual affront to be faced with the prospect of having our ideas appropriated by machines? Is AI really just an enabler of theft and “nothing else”? We all know that AI can’t be stopped, but can its harms be mitigated? How can we balance the need for individual recognition and fair compensation with the benefits of collective creativity and innovation?

What Even Is Plagiarism?

The word “plagiarism” has an interesting etymology. According to Wikipedia, the Latin word plagiarius (literally “kidnapper”) was first used figuratively in the 1st century by the Roman poet Martial, who complained that another poet had “kidnapped his verses.” The derivative word plagiary was introduced into the English language in the early 1600s to describe the act of literary theft.

The official definition of plagiarism varies depending on which academic handbook you’re looking at, but the general consensus is that plagiarism involves using someone else's work or ideas without appropriate attribution, essentially passing them off as one's own. Unlike copyright infringement, plagiarism is not in itself a legal violation. While copyright law defines the rights of creators over their work and sets out the penalties for unauthorized use of that work, it doesn't explicitly define or govern plagiarism, although the two often overlap. Plagiarism is instead an ethical concept, the definition and penalties for which are usually set by individual institutions and based on societal norms.

That said, everybody instinctively knows when something has been ripped off, and we react accordingly.

The Wisdom of Repugnance

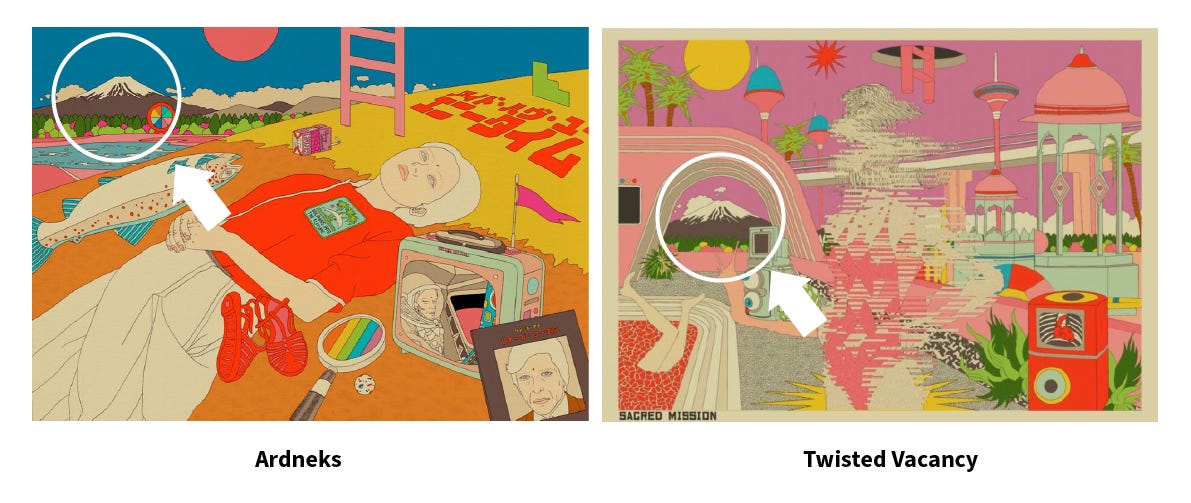

At the beginning of 2021, Indonesian illustrator Kendra Ahimsa, who goes by the name Ardneks, became inundated with online messages from fans reporting that an online persona known as Twisted Vacancy had been plagiarizing his work. When confronted, a spokesperson for Twisted Vacancy brushed off accusations, explaining that the crypto art collective creates its work from an image bank, and likening it to collage work. He explained, “We play with slashing, remixing. When we first developed it, we had dug enough (sic) about the Copyright Law itself. That is why we never use an entire artwork as reference for the bank. We put it in the bank, we spread and we break around 10% of the elements. There is an art of appropriation behind collages. Okay, you split 30% but try to compose differently. It falls into the art of appropriation itself.”

Ok, so legally, Twisted Vacancy might be off the hook. Copyright protects specific, tangible expressions of creativity, such as a painting, a song, or a novel. It does not extend to ideas, methods, or styles. An artist's general style, no matter how distinctive or recognizable, is not something that can be copyrighted under current copyright law. Still, everyone would agree in this case that Ardneks’s moral rights were violated. Even if you can’t precisely explain why, and even if copyright law holds that a certain amount of work may be appropriated for certain uses, most would agree this is about as plain a case of plagiarism as can be found on the internet today.

“Style biting” is one of the more serious ethical offenses one can commit in the art industry. Developing a unique style involves significant personal investment on the part of the artist, not only in terms of years' worth of time and effort but also in terms of emotional energy. When people see creators appropriating another artist’s style down to the letter without credit or compensation, even if it’s not technically illegal, it can feel deeply unfair and even threatening. Artists rely on their intellectual property for their livelihood, and diluting the market with cheap copycats hurts artists and buyers alike, by devaluing artists’ work and making it more difficult for buyers to discern the original maker. It’s easy enough for a corporate entity to argue it was only “influenced by” a certain independent artist when its in-house design team completely rips off that artist’s work in order to sell fast fashion. Theft is a violation of human dignity because it violates the right of an individual artist to possess and enjoy the fruits of their labor. This is so fundamental a principle that the prohibition against theft is a tenet in most major world religions. I am sympathetic to artists’ fears.

So is AI really plagiarizing artists?

How AI Makes Its Art

According to ChatGPT, an AI image generator can use a form of machine learning known as a Generative Adversarial Network (GAN). A GAN consists of two parts, a Generator and a Discriminator, that are trained together. The Generator's job is to create images that look real. It starts by generating images randomly, and over time, as it receives feedback from the Discriminator, it learns to generate more and more realistic images. The training set that the generator uses to synthesize its images is gathered by scraping the internet for image references. This is perfectly ethical; you or I could do the same thing using a search engine. The AI then generates images based on written prompts entered by the end user. The images may replicate a certain style based on patterns the AI has noticed within its training set. Even though it may bug people, this is also perfectly ethical.

The ambiguity shows up in the way AI processes images, which many current generators do using what’s called a “diffusion model.” A diffusion model works by simulating a random process, called a Markov chain, which transforms an original data distribution (such as a set of images) into a simple distribution (like random noise), and vice versa. Starting with an original image, small amounts of random noise are added at each step of the diffusion process. Over many steps, the image is gradually transformed until it becomes pure noise. The reverse process, called “denoising,” involves taking the noisy, random image and gradually reducing the noise, transforming it into a clear, realistic image. The aim of this process is to create a similar image that is not identical to the original, but has the same meaning. When it comes to copyrighted source images, some consider it ethically unclear whether the diffusion model is distinct enough from outright copying to constitute fair use. My instinct is to say that it is distinct enough, because although the analogy is imperfect, a diffusion model isn’t much different from the way human beings develop their own style based on years of influence from other sources. A spokesperson for Stability AI has argued that “Anyone that believes that this isn’t fair use does not understand the technology and misunderstands the law.” In any case, whatever ethical ambiguity still surrounds the diffusion model process will ultimately be cleared up in court.
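For readers who like to see the mechanics, here is a minimal sketch of the noising half of that process, written in plain Python. It is an illustration under stated assumptions, not the code of any real image generator: the function name `forward_diffusion`, the four-number stand-in "image," and the step count and noise level are all made up for this example. The reverse, denoising half requires a trained neural network and is left out.

```python
import math
import random


def forward_diffusion(pixels, num_steps=200, beta=0.02, seed=0):
    """Gradually mix Gaussian noise into `pixels`, one small step at a time.

    All names and default values here are illustrative assumptions.
    """
    rng = random.Random(seed)
    x = list(pixels)
    signal_scale = 1.0  # how much of the original image still survives
    for _ in range(num_steps):
        # Each step depends only on the previous state (the Markov property):
        # shrink the current values slightly, then add a little fresh noise.
        x = [
            math.sqrt(1.0 - beta) * v + math.sqrt(beta) * rng.gauss(0.0, 1.0)
            for v in x
        ]
        signal_scale *= math.sqrt(1.0 - beta)
    return x, signal_scale


# A toy "image" of four pixel values, purely for demonstration.
image = [1.0, -1.0, 0.5, -0.5]
noisy, remaining = forward_diffusion(image)
print(f"fraction of original signal remaining: {remaining:.3f}")
print("noisy pixels:", [round(v, 2) for v in noisy])
```

After enough steps, almost nothing of the original survives in the output, which is the sense in which the image "becomes pure noise." A trained model learns to run these steps backward, guided by patterns across its whole training set rather than by any single stored picture.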

The Illusion of Scarcity

In C.S. Lewis’s “The Screwtape Letters,” the titular demon writes a series of letters in which he counsels a protégé on how to secure the damnation of a human soul. In one of the letters, Screwtape discusses the concept of ownership, using the possessive pronoun “my.” He writes,

We produce this sense of ownership not only by pride but by confusion. We teach them not to notice the different senses of the possessive pronoun - the finely graded differences that run from "my boots" through "my dog", "my servant", "my wife", "my father", "my master" and "my country", to "my God". They can be taught to reduce all these senses to that of "my boots", the "my" of ownership. Even in the nursery a child can be taught to mean by "my Teddy-bear" not the old imagined recipient of affection to whom it stands in a special relation… but "the bear I can pull to pieces if I like".

Here, Screwtape explains the demons’ strategy of encouraging humans to assert ownership over things they don't truly own. The idea is to foster pride, selfishness, and a sense of entitlement. The flattening of all things under the appellation of “mine” encourages a scarcity mindset; it posits that there are only so many goods in the world, that they are scarce, and that insofar as somebody else has something, it represents “something that I cannot have.” In that frame of mind, you are not disposed to see that another’s good is in fact good.

We ought not be so jealously guarded against our neighbors. The scarcity mindset threatens benevolence, which would build up a culture of flourishing. Envy is a capital vice, a habit of mind or heart which gives us an inclination toward that which damages the human soul. Envy works against charity, friendship, goodwill, and benevolence, made manifest in good deeds.

When it comes to art, this type of mindset is made manifest in the way we perceive ownership over our work. While artists may be obliged to share tips and techniques with other artists, it hits different when these techniques appear to be appropriated by machines. Many artists have sounded the alarm. Some have become upset to discover their work on haveibeentrained.com, a searchable database of the 5 billion images used to train popular AI art models, arguing that it “feels like a violation.” I am sympathetic to this. However, too many modern-day artists are overly possessive of what they believe is “theirs.”

The truth is that no artist owns their art entirely. Art is an evolving conversation through time, an interplay of influences, ideas, and reinterpretations. Artists draw on collective cultural heritage, incorporate elements of existing art forms, and translate shared human experiences into their works. Even if an artist isn't consciously drawing from specific works, they're still working within established artistic traditions, genres, styles, and techniques, and they're likely influenced by the broader cultural and historical context in which they live. Show me an artist who can legitimately claim that their style is completely, totally, 100% their own invention and I may agree that they have a leg to stand on in the argument over whether their style is being “stolen” by AI. You may be surprised to find that such an artist doesn’t exist.

Art is a testament to collective creativity, and the value of an artwork extends far beyond the individual creator. The purpose of art is to seek and reflect truth and beauty. Art shapes our culture, informs our understanding of the world, and brings meaning to our lives. The moment an artwork is shared, it becomes a force multiplier, triggering in the viewer a panoply of emotions, thoughts, and conversations. When we see a painting, listen to a song, or watch a film, our personal interpretations and emotional responses contribute to the life of that piece.

While artists' rights to be credited and compensated for their work should be respected inside of the framework of potential lost livelihood, and precise ethical guidelines should be established and adhered to, there is also a strong argument for viewing the collective achievements of art as a shared human legacy. This legacy is important inasmuch as it forms a common thread connecting us all, providing inspiration, solace, and understanding across cultures and generations. A common thread of friendship thus increases our capacity for enjoyment of life, because the other is like unto me, and I am like unto them.

The Way Forward

The one constant that defines human progress throughout all of history is that we are always launching forward into the unimaginable. From the printing press to photography to cinema, television, and streaming services, breakthrough technologies that were once feared for their potential to have a negative impact on creative industries instead pushed creativity forward into unimaginable terrain. Once-feared technologies now happily coexist alongside traditional mediums and serve as valuable tools for today’s artists. How disruptive AI art will actually be is not yet clear, but either way, as they have done throughout time, artists will have to adapt.

On the one hand, I am not worried. I do not believe AI will be dominating the creative fields because AI is not capable of innovation; AI’s job is to synthesize what it already knows based on information it is given. AI generators know nothing whatsoever about the words and images they generate because they have no comprehension of the essence of art. They have no idea what it is like to be alive, and the experience of living is what human expression is all about.

On the other hand, there are some practical steps we can take to mitigate the risks posed by AI’s inevitable interference in creative fields.

Allow working artists to opt out of having their artwork included in AI training sets. It’s a matter of human dignity that we should have autonomy over our creative output, and we should be allowed the opportunity to consent—or not—to having our work emulated by AI.

Develop clear copyright policies for AI. I anticipate that as these issues are litigated in courts and make their way into copyright law, things like diffusion models will be categorized as fair use; but much like the way we currently govern plagiarism, legislative bodies should establish clear guidelines for defining the legal boundaries of ownership and attribution for AI-generated art. Perhaps it will be determined that AI should only be trained on public domain and licensed images. Perhaps these issues will be resolved similarly to how they were in the days of Napster, whose complete disruption of the music industry led to a new approach to music licensing and transformed the way we consume music today. In the case of Napster, it took 10 or 15 years of contentious litigation, but the music industry ultimately figured out how to stay afloat.

Promote transparency. Current industry best practices to avoid plagiarism should extend to areas in which AI is used, including citing sources. Works cited should clearly indicate when content has been generated or influenced by AI. As AI becomes more mainstream, citing sources may ultimately seem quaint, but for now, transparency can help prevent confusion and dishonesty about the origin of the artwork. At the end of the day, the best way to avoid confusion is to cultivate honesty as a core value.

Encourage mutual benevolence in appreciation of the common good, which is not diminished when it is dispersed to its members. Building on AI-generated ideas can empower us to be more creative. It allows us to create complex ideas out of simple ideas, or turn an unstructured idea into a structured idea. Rather than viewing AI as a threat, creative industries can view it as an opportunity for new forms of collaboration.

As technology pushes us into an unknown future, there is only one complete certainty: AI will not stop. There are myriad benefits that AI can potentially bring to the complex problems of the 21st century and it’s our responsibility to do what we can to encourage the benefits and mitigate the risks. We are entering a new world; our duty is to make it the best world we can.