Sex differences, lizardmen and twitter polls

Comparing men and women in a gender-skewed online audience is vulnerable to random, unserious responses.

I’m on parental leave which (sadly) means I’ve been scrolling on twitter with one hand while (happily) holding my cute baby in the other. On the hellsite I’ve come across several polls that combine controversial questions or fringe opinions and questions about the sex/gender of the one taking the poll.

Now I know that these polls are just for fun and Aella specifically (on substack)1 has stated repeatedly that she does not take them seriously. But why not? The sample size is pretty large, right? The boring answer is “a bunch of threats to generalizability”. Those who follow Aella on twitter are not a random sample of the population, people on twitter generally are not a random sample, women might follow her for different reasons than men, the polls get a lot of different engagement for reasons we can’t know, etc. The Algorithm works in mysterious ways.

But the problem I thought about and want to illustrate below has to do with random responding. For most of these polls, the only way to see the results is to vote for something. If someone’s more interested in seeing the results than in contributing to the data collection, they could plausibly just click something at random. I mean these twitter-polls are just for fun and no one is treating them like useful data, so where’s the harm, right?

Combine a tendency towards random responding with the fact that these polls often ask about edgy and controversial opinions (see for example Richard Hanania’s recent poll on whether child prostitution is OK if everyone consents and gets paid enough) and with an overwhelmingly male audience, and you get a recipe for misleading results.

Let’s pretend we take these polls seriously for a moment. The only reason to add a male/female option to these polls that I can think of is that you’re interested in the relative ratio of responses. I built a quick simulation in R to see how different rates of random responses would inflate the type-1 error rate. Quick primer: the type-1 error rate is the probability of wrongly rejecting a null hypothesis that is actually true, and by convention it is set to 5%. So if there really is no difference between men and women, a well-behaved statistical test should report a significant difference only 5% of the time (in the long run).

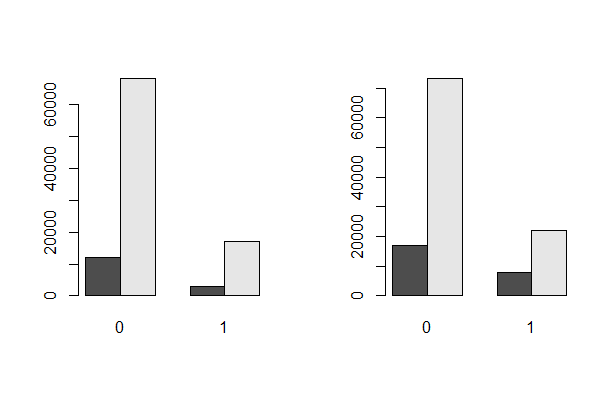

My simulation draws samples from a null model (no difference between the sexes on average) with a specified gender skew and a specified popularity of the opinion. The code then replaces a percentage of the responses with purely random responses. Basically we turn a bar plot that looks like the left plot into one that looks like the right plot.

Here I added 20% random responses. Note the larger shift in the ratio between black and gray at 1, compared to the shift in ratio at 0. Twenty percent random responses may seem excessive, so let’s use a more conservative number for the rest of the sim. Scott Alexander has famously coined the expression the lizardman constant to represent the proportion of people who basically don’t take polling seriously at all, and has suggested that it’s 4%. He based this on the improbable number of people who said they believe Lizardmen secretly run the government. There is no way that many people actually believe that, thus it’s more reasonable to assume that some proportion of people tend to be unserious.
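To see why even a small share of random clickers matters, it can help to work out the expected proportions directly instead of simulating them. The sketch below is an illustration only: the function name `expected.agree` and its arguments are hypothetical, and it assumes (like the simulation) that random responders pick both sex and opinion by coin flip and that sex is coded 1 = male.

```r
# Expected share agreeing with the unpopular opinion within each sex when a
# fraction `random.share` of respondents answer both questions by coin flip.
# Assumptions: opinion is independent of sex among serious respondents (the
# null), and `skew` is the share of serious respondents who are male.
expected.agree <- function(skew = 0.85, popularity = 0.20, random.share = 0.04) {
  serious <- 1 - random.share
  # Expected share of all respondents in each sex-by-agree cell
  male.agree   <- serious * skew * popularity       + random.share * 0.25
  female.agree <- serious * (1 - skew) * popularity + random.share * 0.25
  # Expected share of all respondents of each sex
  male.total   <- serious * skew       + random.share * 0.5
  female.total <- serious * (1 - skew) + random.share * 0.5
  c(male = male.agree / male.total, female = female.agree / female.total)
}

round(expected.agree(), 3)                  # 4% random responders
round(expected.agree(random.share = 0), 3)  # no random responders
```

With the defaults, roughly 20.7% of self-identified men and 23.7% of self-identified women end up agreeing, even though the true rate is 20% for both. The minority group is pulled harder towards 50% because random clickers make up a larger share of it.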

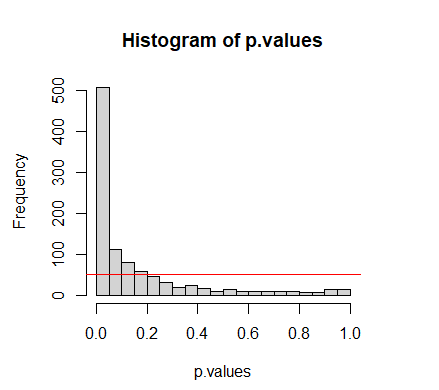

But first, let’s take a look at how it works without any added random responses. If I run my simulation 1,000 times and present the p-values (sorted from smallest to largest) without any random responses, I get a plot like this:

As you can see, the plot is approximately a straight line, since the p-value distribution is uniform under the null. I should expect around 50 of the 1,000 p-values to fall below 0.05, that is, to the left of the vertical red line. The horizontal red line marks 0.05 on the y-axis.

Now, twitter in general skews male, and based on a couple of polls I looked at, Aella specifically gets about 85% self-identified males. Engagement with her polls varies wildly, but 5500 seems like a bar they usually clear. If I input these numbers in my sim2 and assume that the opinion she asks about is quite unpopular/controversial so that around 20% agree with it, the plot instead looks like this:

Note how the red line has moved towards the center of the graph. The blue line is left to mark the expected value of 50. The type-1 error rate has inflated almost 10 times. The chance of wrongly rejecting the null has risen from 5% to a coinflip!3

This problem of course gets worse the larger your gender skew is, the more unpopular the opinion you’re asking about is, and the larger your sample is. For example, if we vary our sex/gender skew but keep the rest of the numbers, the error inflation looks like this:
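A sweep over the skew can be sketched roughly as follows. This is a self-contained illustration, not the exact code behind the plot: `rejection.rate` is a hypothetical helper that mirrors the logic of `sim.function`, and it uses fewer repetitions (200 per skew) so that it runs quickly.

```r
# Share of simulated polls with p < 0.05 under the null, for a given skew.
# Hypothetical helper for illustration; all defaults are assumptions taken
# from the numbers used in this post.
rejection.rate <- function(skew, n = 5500, popularity = 0.20,
                           random.share = 0.04, sims = 200) {
  p.values <- replicate(sims, {
    n.random  <- round(n * random.share)
    n.serious <- n - n.random
    # Serious respondents follow the null; random ones flip coins
    sex     <- c(rbinom(n.serious, 1, skew),       rbinom(n.random, 1, 0.5))
    opinion <- c(rbinom(n.serious, 1, popularity), rbinom(n.random, 1, 0.5))
    # Fixed factor levels so the table is always 2 x 2
    tab <- table(factor(sex, levels = 0:1), factor(opinion, levels = 0:1))
    chisq.test(tab)$p.value
  })
  mean(p.values < 0.05)
}

set.seed(1)
skews <- c(0.50, 0.60, 0.70, 0.80, 0.85, 0.90)
rates <- sapply(skews, rejection.rate)
data.frame(skew = skews, rejection.rate = rates)
```

At a 50/50 audience the random responders look just like everyone else on the sex question, so the rejection rate should stay near 5%; it climbs as the audience gets more lopsided.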

(You can play around with different numbers by copying the code at the end of the post.)4

What’s the point of twitter polls?

“No one is doing Null-Hypothesis-Significance-Testing on their twitter polls,” you might object.

Sure, but I also kind of wonder: What is the point in doing these then? I don’t think they’re just attempts to get engagement. Maybe that’s part of it for some but at the end of the day I think people make these polls because they’re interested in gender-differences. If you just wanted to make people think about edgy questions you wouldn’t add a gender option. Some type of interpretation is bound to be happening. If people viewed the results as more-or-less completely uninterpretable they wouldn’t be fun, either to make or engage with. The results are also public, which means interpretation is not just up to the poll-makers, it’s up to everyone who looks at the result.

Still one shouldn’t overstate the problem with random responding. One could possibly detect if there is a chronic random response problem: If women consistently like the controversial option more than men in your twitter polls (proportionally) this could be an explanation. On the other hand in cases where men and women show wildly different patterns, for example reverse patterns where the majority response for men is the minority response for women, this can’t be caused by some low rate of random responding at high sample sizes.
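The reason a reversed pattern can't come from random clicking alone can be shown with a little arithmetic. In the sketch below, `observed.rate` is a hypothetical helper, and the 30%/70% true rates are made-up numbers for illustration; it assumes random responders choose sex and opinion by coin flip.

```r
# Observed agreement rate in a group: a weighted average of the group's true
# rate and 0.5 (the coin-flip rate), weighted by how much of the group is
# made up of random responders.
# `group.share` is the group's share among serious respondents (assumption).
observed.rate <- function(true.rate, group.share, random.share = 0.04) {
  serious <- (1 - random.share) * group.share  # serious members of the group
  noise   <- random.share * 0.5                # random clickers landing in it
  (serious * true.rate + noise * 0.5) / (serious + noise)
}

# Made-up example: men truly at 30% agree, women truly at 70% agree,
# in an audience that is 85% male
men   <- observed.rate(true.rate = 0.30, group.share = 0.85)
women <- observed.rate(true.rate = 0.70, group.share = 0.15)
round(c(men = men, women = women), 3)
```

Because the observed rate always lies between the true rate and 50%, random responding can shrink a real gap or create a small spurious one, but it cannot turn a minority answer into a majority answer within a group.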

Unfortunately for twitter-poll-enjoyers, random responses are just one example of how the data quality is likely to be poor. They are certainly not the most severe problem, and the more severe ones are bound to behave less predictably. The earlier mentioned Richard Hanania prostitution poll looks quite different depending on when the screenshot is taken. See for example here, where he complains that people did not respond to his poll well, and compare to the ratios it ended up with. When the sample size went from 650000 to 800000 there was a huge influx of people who claim to be female and respond yes. It should go without saying that this data is not trustworthy, and the problem is less related to random responding than to the mysterious dynamics of the twitter algorithm.

If this is all just silly fun, surely one would like to spread awareness around why data-sources such as these are problematic? My impression is that these polls are popular with self-identified rationalists, free thinkers, or heterodox journalists. The type of people that I would expect to groan at bad data-quality. Bad data is often seen as worse than no data (conventionally the type-1 error rate is set as lower than the type-2 error rate).5

I hope this post shifts assumptions about the interpretability of twitter polls ever so slightly.

I don’t mean this post as some sort of attack on Aella specifically. I feel a bit guilty since I’ve seen her exposed to some bad faith criticism of her more serious methodology, related to her non-twitter surveys.

One could of course just build a data-set with the exact expected values, but I personally think a stochastic simulation is much more fun. Simulating is a good habit in statistics, I’ve heard.

```r
# Feel free to copy the code into R.

# Simulate one poll under the null (sex and opinion are independent), then
# overwrite a share of the rows with purely random (50/50) responses.
# Returns the chi-square p-value if p = TRUE, otherwise the simulated data.
sim.function <- function(n = 500, skew = 0.85, popularity = 0.20,
                         add.random.responses = 0.10, p = TRUE) {
  sex <- rbinom(n, 1, skew)
  unpopular.opinion <- rbinom(n, 1, popularity)
  DF <- data.frame(sex, unpopular.opinion)

  n.random.responses <- round(n * add.random.responses)
  if (n.random.responses > 0) {
    # Rows are exchangeable, so replacing the first rows is fine
    DF[1:n.random.responses, ] <- data.frame(
      sex = rbinom(n.random.responses, 1, 0.5),
      unpopular.opinion = rbinom(n.random.responses, 1, 0.5)
    )
  }

  if (p) {
    chisq.test(DF$sex, DF$unpopular.opinion)$p.value
  } else {
    DF
  }
}

simnr <- 1000

# Without random responses
p.values <- replicate(simnr, sim.function(n = 5000, add.random.responses = 0))
plot(sort(p.values), main = "p-values", type = "l", ylab = "")
abline(h = 0.05, col = "red")
abline(v = simnr * 0.05, col = "red")
table(p.values < 0.05)
hist(p.values, breaks = 20)
abline(h = simnr * 0.05, col = "red")

# Aella engagement numbers and gender skew, with lizardman constant
p.values <- replicate(simnr, sim.function(n = 5500, skew = 0.85, popularity = 0.20, add.random.responses = 0.04))
# sum() is safe even when no (or every) p-value is below 0.05,
# where table(...)[2] would return NA
n.significant <- sum(p.values < 0.05)
error.rate <- round(n.significant / (simnr * 0.05), 1)
plot(sort(p.values), main = paste(error.rate, "times inflated type-1 error rate"), type = "l", ylab = "p-values")
abline(v = n.significant, col = "red")
abline(v = simnr * 0.05, col = "blue")
abline(h = 0.05, col = "red")
axis(1, at = c(0, 50))
```

Although it is also valid to question why this is! Ideally both type-1 and type-2 error should be justified based on a principled consideration of different trade-offs. https://osf.io/preprints/psyarxiv/ts4r6/

I'm not sure if you're aware, but after Tumblr introduced polls, it didn't take long at all before it was fairly common practice to include a [see results] option or a nonsense word option to filter out lizardmen responses. I'm not sure how well it worked in practice, but there are a lot of polls where that option predominates (e.g. one very popular post a couple of weeks back surveying sex frequency, where the virgin/celibate option got like 60% of the total vote).