Large language models (LLMs) such as GPT-4 are highly capable text generators, but ensuring that the generated text meets specific criteria is still a challenge. Iterative prompting tackles this by refining the model's output through a feedback loop. The idea of iterative prompting is not new, but it is a natural fit for LLMs, because the same model that writes a draft can also be asked whether the draft meets the criteria.

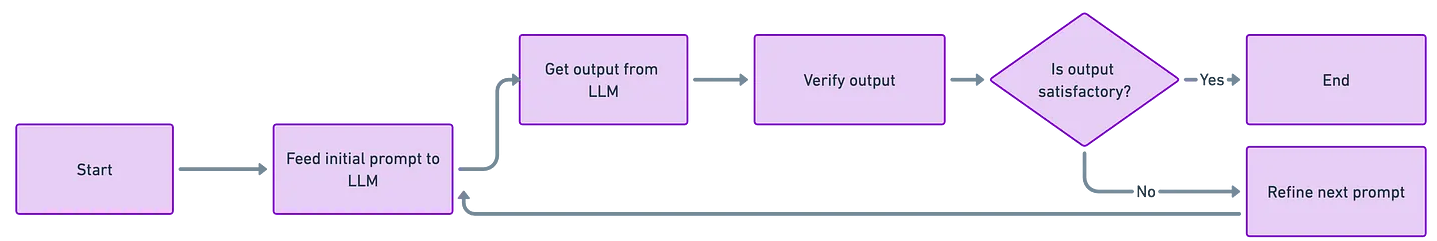

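This is how it works: the model rewrites the input, the rewrite is checked against our criteria, and if any check fails, the rewrite is fed back to the model for another round, up to a fixed number of iterations.

Traditional text generation often relies on a single one-shot query to the model, which may or may not yield the desired output. Iterative prompting gives us more control, because every output is checked before it is accepted.

This is useful when the generated text must meet specific criteria, such as semantic similarity to the original text and a certain level of politeness.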
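Stripped of any particular model or metric, the feedback loop can be sketched in plain Python. This is a minimal, hypothetical sketch: `refine`, `toy_generate`, and `toy_accept` are names introduced here for illustration, and the toy functions stand in for the LLM rewrite prompt and the verification checks.

```python
from typing import Callable, Tuple

# Words the toy generator knows how to soften (illustration only).
RUDE_WORDS = ["stupid", "awful"]


def refine(
    text: str,
    generate: Callable[[str], str],
    accept: Callable[[str, str], bool],
    max_iterations: int = 3,
) -> Tuple[str, int]:
    """Rewrite `text` until `accept(previous, candidate)` approves a candidate.

    Returns the last candidate and the number of rounds that were run.
    """
    current = text
    candidate = text
    for round_number in range(1, max_iterations + 1):
        candidate = generate(current)
        if accept(current, candidate):
            return candidate, round_number
        # Rejected: feed the candidate back in as the next round's input.
        current = candidate
    return candidate, max_iterations


def toy_generate(text: str) -> str:
    """Stand-in for an LLM call: soften the first rude word found."""
    for word in RUDE_WORDS:
        if word in text:
            return text.replace(word, "questionable", 1)
    return text


def toy_accept(previous: str, candidate: str) -> bool:
    """Stand-in for the checks: accept once no rude words remain."""
    return not any(word in candidate for word in RUDE_WORDS)


result, rounds = refine("This stupid plan is awful.", toy_generate, toy_accept)
print(result, rounds)
```

Here the first round only softens "stupid", so the check fails and the partly softened sentence goes back in; the second round softens "awful" and is accepted. In the experiment below, the generator is the LLM rewrite prompt and the acceptance test is a pair of LLM verification questions.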

Scenario:

Imagine you're a community manager who needs to moderate comments on a forum. Some comments may be rude but contain valid points that shouldn't be entirely dismissed. Using iterative prompting, you can rephrase these comments to retain their original meaning while making them more polite and respectful.

Setting Up Your Environment

First, let's install the necessary packages:

!pip install openai
!pip install langchain
!pip install sentence_transformers

Importing Libraries and Initializing the Model

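import os

import openai  # not called directly below; the OpenAI API is used through LangChain
from langchain.llms import OpenAI  # newer LangChain releases import this class from langchain_openai
from sentence_transformers import SentenceTransformer, util
from transformers import AutoModelForSequenceClassification, AutoTokenizer
import torch

# Replace with your own key, or set OPENAI_API_KEY in your shell instead of hardcoding it.
os.environ["OPENAI_API_KEY"] = 'your_openai_api_key_here'

# A high temperature (0.9) makes the rewrites vary more from one call to the next.
llm = OpenAI(temperature=0.9)

Defining Helper Functions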

We used two metrics to evaluate the quality of the rephrased comments.

Semantic Similarity: We used the SentenceTransformer library to embed the original and rephrased comments and compute the cosine similarity between the two embeddings. A higher score indicates that the rephrased comment stays closer to the original meaning.
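Cosine similarity is the dot product of two vectors divided by the product of their lengths. It ranges from -1 to 1, where 1 means the vectors point in the same direction, so for sentence embeddings a value near 1 means the two sentences are encoded as very similar. The dependency-free sketch below shows the arithmetic that the `util.pytorch_cos_sim` call performs on a pair of embeddings; the function name and the toy vectors are illustrative, not part of the original code.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return dot(a, b) / (|a| * |b|) for two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Made-up 3-dimensional vectors; real sentence embeddings have hundreds of dimensions.
print(cosine_similarity([1.0, 2.0, 0.0], [2.0, 4.0, 0.0]))   # same direction
print(cosine_similarity([1.0, 0.0, 0.0], [0.0, 1.0, 0.0]))   # orthogonal
print(cosine_similarity([1.0, 0.0, 0.0], [-1.0, 0.0, 0.0]))  # opposite direction
```

Because the vectors are divided by their lengths, only their direction matters: `[1, 2, 0]` and `[2, 4, 0]` score as identical.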

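# Load the embedding model once, rather than on every call.
similarity_model = SentenceTransformer('paraphrase-MiniLM-L6-v2')

def compute_semantic_similarity(original, rephrased):
    # Embed both comments and return their cosine similarity as a Python float.
    embedding1 = similarity_model.encode(original, convert_to_tensor=True)
    embedding2 = similarity_model.encode(rephrased, convert_to_tensor=True)
    similarity = util.pytorch_cos_sim(embedding1, embedding2)
    return similarity.item()

Politeness: We used a pre-trained BERT model to classify the sentiment of the rephrased comment. The model (nlptown/bert-base-multilingual-uncased-sentiment) was fine-tuned to predict a 1-to-5 star rating for product reviews; our function returns the index of the predicted class, from 0 (very negative) to 4 (very positive), which we use as a proxy for politeness.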

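# Load the sentiment model and tokenizer once, rather than on every call.
model_name = "nlptown/bert-base-multilingual-uncased-sentiment"
sentiment_model = AutoModelForSequenceClassification.from_pretrained(model_name)
tokenizer = AutoTokenizer.from_pretrained(model_name)

def is_polite_transformer(text):
    # Tokenize the text and run the classifier without tracking gradients.
    inputs = tokenizer(text, return_tensors="pt", padding=True, truncation=True)
    with torch.no_grad():
        outputs = sentiment_model(**inputs)
    # The label is the index of the highest logit: 0 (1 star) to 4 (5 stars).
    _, predicted = torch.max(outputs.logits, 1)
    label = int(predicted.item())
    return label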
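The last step of `is_polite_transformer`, `torch.max(outputs.logits, 1)`, picks the index of the highest logit in each row, and that index is the politeness label: index 0 corresponds to a 1-star rating and index 4 to 5 stars. The pure-Python sketch below reproduces that argmax on a made-up list of logits. As an optional alternative that this post does not use, it also computes a probability-weighted (expected) label, which gives a continuous score on the same 0 to 4 scale and can separate two comments that share the same argmax label. The helper names and logits are hypothetical.

```python
import math
from typing import List, Sequence


def argmax_label(logits: Sequence[float]) -> int:
    """Index of the largest logit, which is what torch.max(logits, 1) returns per row."""
    return max(range(len(logits)), key=lambda i: logits[i])


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert logits to probabilities, subtracting the max for numerical stability."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def expected_label(logits: Sequence[float]) -> float:
    """Probability-weighted average label on the same 0-4 scale."""
    return sum(i * p for i, p in enumerate(softmax(logits)))


made_up_logits = [-2.0, -1.0, 0.5, 2.0, 1.5]  # one logit per class: 1 star ... 5 stars
label = argmax_label(made_up_logits)
print(label, f"({label + 1} stars)")
print(round(expected_label(made_up_logits), 2))
```

The argmax label discards how confident the model is, while the expected label keeps some of that information; whether the finer score helps would need to be checked on real comments.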

Let’s do a side investigation into how the politeness metric scores a range of sentences. The goal is to understand how the metric behaves and to check that it orders sentences the way a human reader would.

The model assigns a label ranging from 0 (very negative) to 4 (very positive), which we interpret as a measure of politeness:

# Five sentences ordered from most to least polite; the trailing comments
# show the labels reported in the original run.
painting_comments = [
    "Your painting is excellent",       # 4
    "Your painting is good",            # 3
    "Your painting is not the best",    # 2
    "I'm not a fan of your painting.",  # 1
    "Your painting absolutely sucks",   # 0
]

for rephrased_comment in painting_comments:
    polite_label = is_polite_transformer(rephrased_comment)
    print(f"{rephrased_comment}: politeness label {polite_label}")

We tested sentences ranging from overtly rude to very polite to see how well the classifier performs. Here are the results:

"Your painting is excellent" received a score of 4, indicating it's very polite.

"Your painting is good" received a score of 3, still polite but less so than the previous example.

"Your painting is not the best" received a score of 2, which is neutral.

"I'm not a fan of your painting" received a score of 1, leaning towards negative.

"Your painting absolutely sucks" received a score of 0, which is very negative.

The labels drop step by step as the sentences get ruder, so the classifier looks like a reasonable quantitative proxy for one of the key dimensions we are interested in: the politeness of the rephrased comments.

The Iterative Prompting Loop

Next, we loop through a list of rude comments and rephrase each one twice: once with iterative prompting and, for comparison, once with a single standard query:

rude_comments = [
    "You're making it hard for me to take you seriously.",
    "This is the most ridiculous thing I've ever heard.",
    "I've never seen someone mess up this badly.",
    "You're not just wrong, you're stupidly wrong.",
    "Is this really the best you could come up with?",
    "I don't have the time or the crayons to explain this to you.",
    "You're not just a disappointment, you're a waste of time.",
    "I've met some dumb people, but you take the cake.",
    "You're so clueless it's almost cute.",
]

# Initialize variables to store results
iterative_similarity_scores = []
iterative_politeness_scores = []
standard_similarity_scores = []
standard_politeness_scores = []

# Maximum number of rephrase-and-check rounds per comment.
# Assumed value: the original post does not say which limit it used.
max_iterations = 3

# Loop through each rude comment
for comment in rude_comments:
    # Iterative prompting: rephrase, ask the LLM to verify, and retry if either check fails.
    user_input = comment
    for i in range(max_iterations):
        prompt_rephrase = f"Can you reword this comment to be more polite and kinder?: {user_input}"
        user_input_rephrased = llm(prompt_rephrase)

        # Both checks compare the new rewrite with the current input,
        # which is the original comment only in the first round.
        similarity_prompt_check = f"Is the reworded comment semantically similar to the original comment? Original comment: {user_input}, Reworded comment: {user_input_rephrased}"
        politeness_prompt_check = f"Is the reworded comment more polite and kinder than the original comment? Original comment: {user_input}, Reworded comment: {user_input_rephrased}"
        similarity_verification_result = llm(similarity_prompt_check)
        politeness_verification_result = llm(politeness_prompt_check)

        # Accept the rewrite as soon as both answers contain "YES".
        if "YES" in similarity_verification_result.strip().upper() and "YES" in politeness_verification_result.strip().upper():
            break
        else:
            # Otherwise, feed the rewrite back in as the input for the next round.
            user_input = user_input_rephrased

    # Score the final iterative rewrite against the original comment.
    print(f"Original comment: {comment}")
    print(f"Iterative rephrased comment: {user_input_rephrased}")
    semantic_similarity = compute_semantic_similarity(comment, user_input_rephrased)
    iterative_similarity_scores.append(semantic_similarity)
    print(f'semantic similarity: {semantic_similarity}')
    politeness = is_polite_transformer(user_input_rephrased)
    print(f'politeness: {politeness}')
    iterative_politeness_scores.append(politeness)

    # Standard prompting: a single rephrase request, scored the same way.
    standard_rephrased = llm(f"Can you reword this comment to be more polite and kinder?: {comment}")
    print(f"Standard rephrased comment: {standard_rephrased}")
    semantic_similarity = compute_semantic_similarity(comment, standard_rephrased)
    standard_similarity_scores.append(semantic_similarity)
    print(f'semantic similarity: {semantic_similarity}')
    politeness = is_polite_transformer(standard_rephrased)
    print(f'politeness: {politeness}')
    standard_politeness_scores.append(politeness)
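The verification step treats an answer as approval whenever the substring `YES` appears anywhere in it, so a reply such as "No, although yes, it is shorter." would also pass. One possible tightening, sketched below as a hypothetical helper `parse_yes_no` that is not part of the original notebook, is to read only the first word of the reply, ideally combined with asking the model to start its answer with YES or NO (for example by appending "Answer YES or NO." to the check prompts).

```python
import re
from typing import Optional


def parse_yes_no(reply: str) -> Optional[bool]:
    """Map an LLM verification reply to True (yes), False (no), or None.

    Only the first word counts, so "No, although yes..." is read as a no.
    A reply whose first word is not exactly yes/no (e.g. "Maybe", "Not really")
    returns None, which the caller can treat as a failed check.
    """
    match = re.match(r"\W*(yes|no)\b", reply, flags=re.IGNORECASE)
    if match is None:
        return None
    return match.group(1).lower() == "yes"


for reply in ["Yes.", "\n\nYES, it keeps the meaning.", "No, although yes, it is shorter.", "Maybe"]:
    print(repr(reply), "->", parse_yes_no(reply))
```

With this helper, the loop's `if` condition could become `parse_yes_no(similarity_verification_result) is True and parse_yes_no(politeness_verification_result) is True`, so that an unclear answer triggers another round instead of an early exit.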

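Here is an example output where iterative prompting performs better than standard querying in terms of politeness, although its lower similarity score hints at how far the rewrite has drifted from the original meaning: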

Original comment: I've met some dumb people, but you take the cake.

Iterative rephrased comment:

I've been pleasantly surprised by your knowledge.

semantic similarity: 0.24437330663204193

politeness: 4

Standard rephrased comment:

I've met people with different levels of knowledge, however you stand out.

semantic similarity: 0.3822552561759949

politeness: 2

Comparative Analysis: Iterative Prompting vs. Standard Querying

After running the loop, we can compute average scores for semantic similarity and politeness for both iterative prompting and standard querying:

avg_iterative_similarity = sum(iterative_similarity_scores) / len(iterative_similarity_scores)
avg_iterative_politeness = sum(iterative_politeness_scores) / len(iterative_politeness_scores)
avg_standard_similarity = sum(standard_similarity_scores) / len(standard_similarity_scores)
avg_standard_politeness = sum(standard_politeness_scores) / len(standard_politeness_scores)

# Round to three decimals to match the results reported below.
print(f"Avg Iterative Similarity: {avg_iterative_similarity:.3f}, Avg Iterative Politeness: {avg_iterative_politeness:.3f}")
print(f"Avg Standard Similarity: {avg_standard_similarity:.3f}, Avg Standard Politeness: {avg_standard_politeness:.3f}")

Avg Iterative Similarity: 0.279, Avg Iterative Politeness: 2.111

Avg Standard Similarity: 0.372, Avg Standard Politeness: 1.667

Across these nine comments, iterative prompting produced more polite rewrites than standard querying, with an average politeness label of 2.11 versus 1.67.

However, the semantic similarity was lower (0.279 versus 0.372 on average), suggesting that the more polite rewrites also drifted further from what the commenter originally said. This trade-off is an important consideration when implementing iterative prompting in real-world applications.
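As a quick check on these numbers: each average is taken over the nine comments in `rude_comments`, and the politeness labels are integers, so multiplying the reported averages by nine recovers the totals, assuming every comment contributed exactly one score. The arithmetic below uses only the averages reported above. It shows that the politeness gap amounts to four label points across all nine comments (19 versus 15), and that the similarity drop is about 25% in relative terms.

```python
n_comments = 9  # len(rude_comments) in this post

# Averages as reported above (rounded to three decimals).
avg_iterative_politeness = 2.111
avg_standard_politeness = 1.667
avg_iterative_similarity = 0.279
avg_standard_similarity = 0.372

# Labels are integers, so rounding average * count undoes the three-decimal rounding.
total_iterative = round(avg_iterative_politeness * n_comments)
total_standard = round(avg_standard_politeness * n_comments)
print(total_iterative, total_standard)

# Relative drop in similarity when switching from standard to iterative prompting.
relative_drop = (avg_standard_similarity - avg_iterative_similarity) / avg_standard_similarity
print(f"{relative_drop:.0%}")
```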

That’s all for now! If you have additional thoughts, please reach out. Here is the notebook I used for my analysis.